Image: http://www.timemachinego.com/linkmachinego/2002/08/

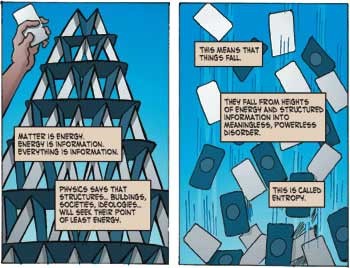

"Entropy should not and does not depend on our perception of order in the system. The amount of heat a system holds for a given temperature does not change depending on our perception of order. Entropy, like pressure and temperature is an independent thermodynamic property of the system that does not depend on our observation.

Entropy As Diversity

A better word that captures the essence of entropy on the molecular level is diversity. Entropy represents the diversity of internal movement of a system. The greater the diversity of movement on the molecular level, the greater the entropy of the system. Order, on the other hand, may be simple or complex. A living system is complex. A living system has a high degree of order AND an high degree of entropy. A raccoon has more entropy than a rock. A living, breathing human being, more than a dried up corpse."

http://www.science20.com/train_thought/blog/entropy_not_disorder-75081
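The quote gives no formula for "diversity". One standard way to make it quantitative, assumed here rather than taken from the source, is the Gibbs form S = -k_B Σ p_i ln p_i over the probabilities p_i of a system's microstates: the more evenly the system spreads over its accessible microstates, the larger S. The sketch below reports S in units of k_B for three made-up four-state distributions; it illustrates the diversity reading only, not the raccoon-versus-rock comparison.

```python
import math
from typing import Sequence


def gibbs_entropy(probs: Sequence[float]) -> float:
    """Entropy in units of k_B: S / k_B = sum over p > 0 of p * ln(1/p)."""
    if any(p < 0 for p in probs) or not math.isclose(sum(probs), 1.0):
        raise ValueError("probs must be a probability distribution")
    # Microstates with p == 0 contribute nothing (the limit of p ln p is 0).
    return sum(p * math.log(1.0 / p) for p in probs if p > 0)


# Made-up (hypothetical) occupation probabilities over four microstates.
distributions = {
    "one state only": [1.0, 0.0, 0.0, 0.0],
    "mostly one state": [0.7, 0.1, 0.1, 0.1],
    "evenly spread": [0.25, 0.25, 0.25, 0.25],
}

for name, probs in distributions.items():
    print(f"{name:>16}: S/k_B = {gibbs_entropy(probs):.3f}")
```

Running it prints 0.000, 0.940 and 1.386 (that is, ln 4) in that order: the more "diverse" the occupation of microstates, the higher the entropy.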

"Syntropy is another word for negative entropy, or negentropy (also extropy). I prefer syntropy because it is a positive term for an otherwise double negative phrase meaning the absence of an absence (or minus the minus of order). Syntropy might best be thought of as the "capacity for entropy," and increased certainty and structure. A technological or living system acts as an efficient drain for entropy -- the more organized, structured, and complex the organization, the faster the system can generate entropy. In other words, the more syntropic it is, the more efficient it is in creating entropy. At the same time, the creation of entropy is what you get with the expenditure of energy, so this "urge" to drain entropy becomes a pump for order!"

Image: http://www.kk.org/thetechnium/archives/2009/01/the_cosmic_gene.php

"Things do not change; we change." - Henry David Thoreau

"Therefore, the seeker after the truth is not one who studies the writings of the ancients and, following his natural disposition, puts his trust in them, but rather the one who suspects his faith in them and questions what he gathers from them, the one who submits to argument and demonstration, and not to the sayings of a human being whose nature is fraught with all kinds of imperfection and deficiency. Thus the duty of the man who investigates the writings of scientists, if learning the truth is his goal, is to make himself an enemy of all that he reads, and, applying his mind to the core and margins of its content, attack it from every side. He should also suspect himself as he performs his critical examination of it, so that he may avoid falling into either prejudice or leniency." - Ibn al-Haytham

"In mathematics, Ibn al-Haytham builds on the mathematical works of Euclid and Thabit ibn Qurra, and goes on to systemize infinitesimal calculus, conic sections, number theory, and analytic geometry after linking algebra to geometry."

http://www.newworldencyclopedia.org/entry/Ibn_al-Haytham#Alhazen.27s_problem

"According to Merriam-Webster, logic is the science that deals with the principles and criteria of validity of inference and demonstration. It is the science of the formal principles of reasoning. A logic consists of a first order language of types, together with an axiomatic system and a model-theoretic semantics."

http://suo.ieee.org/IFF/metalevel/lower/ontology/ontology/version20021205.htm

Progressive Ontology Alignment for Meaning Coordination: An Information-Theoretic Foundation:

http://citeseerx.ist.psu.edu/viewdoc/download?doi=10.1.1.108.2226&rep=rep1&type=pdf

A Formal Model for Situated Semantic Alignment:

http://www.dit.unitn.it/~p2p/RelatedWork/Matching/atencia07.pdf

Semantic Alignment of Context-Goal Ontologies:

Several distributed systems need to interoperate and exchange information. Ontologies have gained popularity in the AI community as a means of enriching the description of information and making its context more explicit. Thus, to enable interoperability between systems, it is necessary to align the ontologies describing them in a sound manner. Our main interest is focused on ontologies describing system functionalities. We treat these functionalities as goals to achieve. In general, a goal is related to the realization of an action in a particular context. Therefore, we call ontologies describing goals and their context Context-Goal Ontologies. Most of the methodologies proposed to reach interoperability are semi-automatic; they are based on probabilities or statistics rather than on mathematical models. The purpose of this paper is to investigate an approach where the alignment of C-G Ontologies achieves automatic and semantic interoperability between distributed systems based on a mathematical model, "Information Flow".

http://ftp.informatik.rwth-aachen.de/Publications/CEUR-WS/Vol-390/paper6.pdf

TOWARDS INTENSIONAL/EXTENSIONAL INTEGRATION BETWEEN ONTOLOGIES

This paper presents ongoing research in the field of extensional mappings between ontologies. Hitherto, the task of generating mappings between ontologies has focused on the intensional level of ontologies. The term intensional level refers to the set of concepts that are included in an ontology. However, an ontology that has been created for a specific task or application needs to be populated with instances. These comprise the extensional level of an ontology. This level is generally neglected during the ontology integration procedure. Thus, although methodologies for geographic ontology integration, ranging from alignment to true integration, have over the years provided a solid ground for information exchange, little has been done to explore the relationships between the data. In this context, this research strives to set up a framework for extensional mappings between ontologies using Information Flow.

http://www.isprs.org/proceedings/XXXVI/2-W40/16_XXXVI-2-W40.pdf

Information Flow based Ontology Mapping

The use of ontologies for knowledge organization is a common way to resolve semantic heterogeneity. But when the categories of related concepts in different ontologies differ, semantic interoperation encounters new obstacles, and a new theoretical model is needed to solve this kind of problem. Information flow (IF) theory seems to be a promising avenue to this end. In this paper, we analyze semantic heterogeneities between two data sources in the distributed Xu Beihong's Galleries and describe an instance-based approach to accomplish semantic interoperation, together with an IF-based process for formulating the global semantics. The emphasis is on how to use IF theory to discover the duality of concepts and instances in knowledge sharing. Finally, further related problems are discussed.

http://ieeexplore.ieee.org/Xplore/login.jsp?url=http%3A%2F%2Fieeexplore.ieee.org%2Fiel5%2F4617324%2F4617326%2F04617435.pdf%3Farnumber%3D4617435&authDecision=-203

Semantic Alignment in the Context of Agent Interactions

We provide the formal foundation of a novel approach to tackling semantic heterogeneity in multi-agent communication by looking at semantics related to interaction, in order to avoid dependency on a priori semantic agreements. We do not assume the existence of any ontologies, either local to the interacting agents or external to them, and we rely only on the interactions themselves to resolve terminological mismatches. In the approach taken in this paper, we look at the semantics of messages exchanged during an interaction entirely from an interaction-specific point of view: messages are deemed semantically related if they trigger compatible interaction state transitions, where compatibility means that the interaction progresses in the same direction for each agent, even though their partial view of the interaction (their interaction model) may be more constrained than the actual interaction that is happening. Our underlying claim is that semantic alignment is often relative to the particular interaction in which agents are engaged, and that in such cases the interaction state should be taken into account and brought into the alignment mechanism.

http://www.cisa.inf.ed.ac.uk/OK/Publications/Semantic%20alignment%20in%20the%20context%20of%20agent%20interactions.pdf

Formal Method for Aligning Goal Ontologies

Many distributed heterogeneous systems interoperate and exchange information with one another. Currently, most systems are described in terms of ontologies. When ontologies are distributed, the problem of finding related concepts between them arises. This problem is addressed by a process, called “Ontology Alignment,” which defines rules to relate relevant parts of different ontologies. In the literature, most of the methodologies proposed to achieve ontology alignment are semi-automatic or carried out directly by hand. In the present paper, we propose an automatic and dynamic technique for aligning ontologies. Our main interest is focused on ontologies describing services provided by systems. In fact, the notion of service is a key one in the description and functioning of distributed systems. Based on a teleological assumption, services are related to goals through the paradigm ‘Service as goal achievement’, through the use of ontologies of services, or more precisely of goals. These ontologies are called “Goal Ontologies.” So, in this study we investigate an approach where the alignment of ontologies provides full semantic integration between distributed goal ontologies in the engineering domain, based on the Barwise and Seligman Information Flow (denoted IF) model.

http://www.springerlink.com/content/e6n1pl0277817615/
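Several of the abstracts above rest on Barwise and Seligman's Information Flow (IF) model. As a minimal sketch of its two basic notions, assuming the standard textbook definitions: a classification is a set of tokens, a set of types and a relation saying which tokens are of which types; an infomorphism from A to B pairs a map on types (A to B) with a map on tokens in the opposite direction (B to A), such that a token b of B is of type up(α) exactly when down(b) is of type α in A. The class `Classification`, the function `is_infomorphism` and the toy "gallery" data are hypothetical illustrations, not taken from any of the cited papers.

```python
from dataclasses import dataclass
from typing import Callable, FrozenSet, Hashable, Tuple


@dataclass(frozen=True)
class Classification:
    """A Barwise-Seligman classification: tokens, types, and a 'token is of type' relation."""
    tokens: FrozenSet[Hashable]
    types: FrozenSet[Hashable]
    relation: FrozenSet[Tuple[Hashable, Hashable]]  # pairs (token, type)

    def classifies(self, token: Hashable, typ: Hashable) -> bool:
        return (token, typ) in self.relation


def is_infomorphism(
    a: Classification,
    b: Classification,
    up: Callable[[Hashable], Hashable],    # types of a -> types of b
    down: Callable[[Hashable], Hashable],  # tokens of b -> tokens of a
) -> bool:
    """Check the fundamental property: down(t) is of type alpha in a iff t is of type up(alpha) in b."""
    if any(up(alpha) not in b.types for alpha in a.types):
        return False
    if any(down(t) not in a.tokens for t in b.tokens):
        return False
    return all(
        a.classifies(down(t), alpha) == b.classifies(t, up(alpha))
        for t in b.tokens
        for alpha in a.types
    )


# Hypothetical toy data: a local ontology a and a gallery catalogue b.
a = Classification(
    tokens=frozenset({"work1", "work2"}),
    types=frozenset({"painting", "ink"}),
    relation=frozenset({("work1", "painting"), ("work1", "ink"), ("work2", "painting")}),
)
b = Classification(
    tokens=frozenset({"rec1", "rec2"}),
    types=frozenset({"Painting", "InkWash"}),
    relation=frozenset({("rec1", "Painting"), ("rec1", "InkWash"), ("rec2", "Painting")}),
)
up = {"painting": "Painting", "ink": "InkWash"}
down = {"rec1": "work1", "rec2": "work2"}

print(is_infomorphism(a, b, up.__getitem__, down.__getitem__))
```

The check prints `True` for this data. Adding `("rec2", "InkWash")` to `b` would break the match for `work2`, which is one way to see the concept/instance duality the abstracts mention: types travel in one direction, tokens in the other, and an alignment holds only if classification is preserved across both.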

“With a roadmap in place, the participating agencies and their countries will benefit enormously from a comprehensive, global approach to space exploration.”

http://www.examiner.com/dc-in-washington-dc/international-cooperation-needed-for-space-exploration

"This article explores the pros and cons of both types of space exploration and hopefully will spark more discussion of this complex and highly political issue.

Unmanned Missions – going where no man has gone before - and maybe never will

Robotic space exploration has become the heavy lifter for serious space science. While shuttle launches and the International Space Station get all the media coverage, these small, relatively inexpensive unmanned missions are doing important science in the background.

Most scientists agree: both the shuttle (STS – Space Transportation System) and the International Space Station are expensive and unproductive means to do space science.

NASA has long touted the space station as the perfect platform to study space and the shuttle a perfect vehicle to build it. However, as early as 1990, 13 different science groups rejected the space station citing huge expenses for small gains.

Shuttle disasters, first the loss of Challenger and then Columbia’s catastrophic reentry in February 2003, have forced NASA to keep mum about crewed space exploration, and the International Space Station is on hold.

The last important media event promoting manned flight was Senator John Glenn’s ride in 1998 – ostensibly to do research on the effects of spaceflight on the human body, but widely seen by scientists as nothing but a publicity stunt.

Since each orbiter launch cost $420 million in 1998, it was the world’s most expensive publicity campaign to date. Proponents say the publicity is needed to support space program funding. Scientific groups assert the same money could have paid for two unmanned missions that do new science, not repeat similar experiments already performed by earlier missions.

Indeed, why do tests on the effects of zero gravity on humans anyway when they can sit comfortably behind consoles directing robotic probes from Earth?

Space is a hostile place for humans. All their needs must be met by bringing a hospitable environment up from a steep gravity well, the cost of which is enormous. The missions must be planned to avoid stressing our fragile organisms. We need food, water and air, requiring complicated and heavy equipment. All this machinery needs to be monitored, reducing an astronaut’s available time to carry out experiments. Its sheer weight alone substantially reduces the useful payload.

The space shuttle is a hopelessly limited vehicle. It’s only capable of reaching low Earth orbit. Worse, the space station it services is placed in the same orbit, one that is not ideal for any type of space science. Because the station is so close to the Earth, gravity constantly tugs at it, making it unstable for the fabrication of large crystals – part of NASA’s original plans but later nixed by the American Crystallographic Association.

To date, more than 20 scientific organizations worldwide have come out against the space station and are recommending the funds be used for more important unmanned missions.

NASA has gone so far as to create myths about economic spin-offs from manned spaceflight, the general idea being that the enormous expense later results in useful technology that improves our lives. Items like Velcro, Tang and Teflon are popularly believed to have come from the space program or to have been invented by NASA. There is only one problem: they did not.

Shuttle launches are expensive: very expensive. Francis Slakey, a PhD physicist who writes about space for Scientific American, said, “The shuttle’s cargo bay can carry 23,000 kilos (51,000 lbs) of payload and can return 14,500 kilos back to earth. Suppose that NASA loaded the Shuttle’s cargo bay with confetti to be launched into space. If every kilo of confetti miraculously turned into gold during the return trip, the mission would still lose $270 million.” This was written in 1999 when a shuttle flight cost $420 million.

Currently, it’s estimated that the shuttle program’s average cost per flight has been about $1.3 billion over its lifetime and about $750 million per launch over its most recent five years of operations. This total includes development costs and numerous safety modifications. That means each shuttle launch could pay for 2 to 3 unmanned missions.

While recent failures have more than quadrupled success rates for unmanned missions, they still have managed to keep space programs alive – not just for the US, but Russia, Japan and China as well.

Mars Pathfinder and the Mars Exploration Rovers have succeeded beyond the expectations of their designers and continue to deliver important data to earthbound scientists."

http://www.physorg.com/news8442.html
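Slakey's confetti-into-gold comparison in the article above implies a gold price that the quote leaves unstated. Backing it out from the quoted figures ($420 million per flight, 14,500 kg returned, $270 million still lost) is simple arithmetic; the troy-ounce conversion factor (31.1034768 g) is standard and is the only number not taken from the text.

```python
# Figures quoted in the article (Slakey, 1999).
COST_PER_FLIGHT = 420e6        # dollars per shuttle flight
RETURN_PAYLOAD_KG = 14_500     # kilograms the orbiter can bring back
CLAIMED_LOSS = 270e6           # dollars still lost if all returned cargo were gold

# Standard conversion: one troy ounce is 31.1034768 grams.
GRAMS_PER_TROY_OUNCE = 31.1034768

# Value of the gold cargo implied by "the mission would still lose $270 million".
gold_value = COST_PER_FLIGHT - CLAIMED_LOSS
implied_price_per_kg = gold_value / RETURN_PAYLOAD_KG
implied_price_per_oz = implied_price_per_kg * GRAMS_PER_TROY_OUNCE / 1000

print(f"Implied gold value: ${gold_value / 1e6:.0f} million")
print(f"Implied gold price: ${implied_price_per_kg:,.0f}/kg, ${implied_price_per_oz:,.0f}/troy oz")
```

It prints an implied cargo value of $150 million, about $10,345 per kilogram or roughly $322 per troy ounce; the source does not say which gold price Slakey actually assumed.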

Part I [sections 2–4] draws out the conceptual links between modern conceptions of teleology and their Aristotelian predecessor, briefly outlines the mode of functional analysis employed to explicate teleology, and develops the notion of cybernetic organisation in order to distinguish teleonomic and teleomatic systems.... Part II is concerned with arriving at a coherent notion of intenti

http://philpapers.org/rec/CHRACS

Some Considerations Regarding Mathematical Semiosis:

http://citeseerx.ist.psu.edu/viewdoc/download?doi=10.1.1.144.311&rep=rep1&type=pdf

More Than Life Itself: A Synthetic Continuation in Relational Biology:

http://www.ontoslink.com/index.php?page=shop.getfile&file_id=432&product_id=288&option=com_virtuemart&Itemid=79&lang=en

Complexity, Artificial Life and Self-Organizing Systems Glossary:

http://www.calresco.org/glossary.htm

Praefatio: Unus non sufficit orbis

Nota bene

Prolegomenon: Concepts from Logic

...In principio...

Subset

Conditional Statements and Variations

Mathematical Truth

Necessity and Sufficiency

Complements

Neither More Nor Less

PART I: Exordium

1 Praeludium: Ordered Sets

Mappings

Equivalence Relations

Partially Ordered Sets

Totally Ordered Sets

2 Principium: The Lattice of Equivalence Relations

Lattices

The Lattice X4

Mappings and Equivalence Relations

Linkage

Representation Theorems

3 Continuatio: Further Lattice Theory

Modularity

Distributivity

Complementarity

Equivalence Relations and Products

Covers and Diagrams

Semimodularity

Chain Conditions

PART II: Systems, Models, and Entailment

4 The Modelling Relation

Dualism

Natural Law

Model versus Simulation

The Prototypical Modelling Relation

The General Modelling Relation

5 Causation

Aristotelian Science

Aristotle’s Four Causes

Connections in Diagrams

In beata spe

6 Topology

Network Topology

Traversability of Relational Diagrams

The Topology of Functional Entailment Paths

Algebraic Topology

Closure to Efficient Causation

PART III: Simplex and Complex

7 The Category of Formal Systems

Categorical System Theory

Constructions in S

Hierarchy of S-Morphisms and Image Factorization

The Lattice of Component Models

The Category of Models

The $ and the :

Analytic Models and Synthetic Models

The Amphibology of Analysis and Synthesis

8 Simple Systems

Simulability

Impredicativity

Limitations of Entailment and Simulability

The Largest Model

Minimal Models

Sum of the Parts

The Art of Encoding

The Limitations of Entailment in Simple Systems

9 Complex Systems

Dichotomy

Relational Biology

PART IV: Hypotheses fingo

10 Anticipation

Anticipatory Systems

Causality

Teleology

Synthesis

Lessons from Biology

An Anticipatory System is Complex

11 Living Systems

A Living System is Complex

(M,R)-Systems

Interlude: Reflexivity

Traversability of an (M,R)-System

What is Life?

The New Taxonomy

12 Synthesis of (M,R)-Systems

Alternate Encodings of the Replication Component

Replication as a Conjugate Isomorphism

Replication as a Similarity Class

Traversability

PART V: Epilogus

13 Ontogenic Vignettes

(M,R)-Networks

Anticipation in (M,R)-Systems

Semiconservative Replication

The Ontogenesis of (M,R)-Systems

Appendix: Category Theory

Categories · Functors · Natural Transformations

Universality

Morphisms and Their Hierarchies

Adjoints

Bibliography

Acknowledgments

Index

Praefatio

Unus non sufficit orbis

In my mentor Robert Rosen’s iconoclastic masterwork Life Itself [1991], which dealt with the epistemology of life, he proposed a Volume 2 that was supposed to deal with the ontogeny of life. As early as 1990, before Life Itself (i.e., ‘Volume 1’) was even published (he had just then signed a contract with Columbia University Press), he mentioned to me in our regular correspondence that Volume 2 was “about half done”. Later, in his 1993 Christmas letter to me, he wrote:

...I’ve been planning a companion volume [to Life Itself] dealing with ontology. Well, that has seeped into every aspect of everything else, and I think I’m about to make a big dent in a lot of old problems. Incidentally, that book [Life Itself] has provoked a very large response, and I’ve been hearing from a lot of people, biologists and others, who have been much dissatisfied with prevailing dogmas, but had no language to articulate their discontents. On the other hand, I’ve outraged the “establishment”. The actual situation reminds me of when I used to travel in Eastern Europe in the old days, when everyone was officially a Dialectical Materialist, but unofficially, behind closed doors, nobody was a Dialectical Materialist.

http://www.ontoslink.com/index.php?page=shop.getfile&file_id=432&product_id=288&option=com_virtuemart&Itemid=79&lang=en

Cheating the Millennium: The Mounting Explanatory Debts of Scientific Naturalism

2003 Christopher Michael Langan

1. Introduction: Thesis + Antithesis = Synthesis

2. Two Theories of Biological Causality

3. Causality According to Intelligent Design Theory

4. Causality According to Neo-Darwinism

5. A Deeper Look at Causality: The Connectivity Problem

6. The Dualism Problem

7. The Structure Problem

8. The Containment Problem

9. The Utility (Selection) Problem

10. The Stratification Problem

11. Synthesis: Some Essential Features of a Unifying Model of Nature and Causality

...

The Utility (Selection) Problem

As we have just noted, deterministic causality transforms the states of preexisting objects according to preexisting laws associated with an external medium. Where this involves or produces feedback, the feedback is of the conventional cybernetic variety; it transports information through the medium from one location to another and then back again, with transformations at each end of the loop. But where objects, laws and media do not yet exist, this kind of feedback is not yet possible. Accordingly, causality must be reformulated so that it can not only transform the states of natural systems, but account for self-deterministic relationships between states and laws of nature. In short, causality must become metacausality.([35]Metacausality is the causal principle or agency responsible for the origin or “causation” of causality itself (in conjunction with state). This makes it responsible for its own origin as well, ultimately demanding that it self-actualize from an ontological groundstate consisting of unbound ontic potential.)

Self-determination involves a generalized atemporal([36]Where time is defined on physical change, metacausal processes that affect potentials without causing actual physical changes are by definition atemporal.) kind of feedback between physical states and the abstract laws that govern them. Whereas ordinary cybernetic feedback consists of information passed back and forth among controllers and regulated entities through a preexisting conductive or transmissive medium according to ambient sensory and actuative protocols – one may think of the Internet, with its closed informational loops and preexisting material processing nodes and communication channels, as a ready example - self-generative feedback must be ontological and telic rather than strictly physical in character.([37]Telesis is a convergent metacausal generalization of law and state, where law relates to state roughly as the syntax of a language relates to its expressions through generative grammar…but with the additional stipulation that as a part of syntax, generative grammar must in this case generate itself along with state. Feedback between syntax and state may thus be called telic feedback.) That is, it must be defined in such a way as to “metatemporally” bring the formal structure of cybernetics and its physical content into joint existence from a primitive, undifferentiated ontological groundstate. To pursue our example, the Internet, beginning as a timeless self-potential, would have to self-actualize, in the process generating time and causality.

But what is this ontological groundstate, and what is a “self-potential”? For that matter, what are the means and goal of cosmic self-actualization? The ontological groundstate may be somewhat simplistically characterized as a complete abeyance of binding ontological constraint, a sea of pure telic potential or “unbound telesis”. Self-potential can then be seen as a telic relationship of two lower kinds of potential: potential states, the possible sets of definitive properties possessed by an entity along with their possible values, and potential laws (nomological syntax) according to which states are defined, recognized and transformed.([38]Beyond a certain level of specificity, no detailed knowledge of state or law is required in order to undertake a generic logical analysis of telesis.) Thus, the ontological groundstate can for most purposes be equated with all possible state-syntax relationships or “self-potentials”, and the means of self-actualization is simply a telic, metacausal mode of recursion through which telic potentials are refined into specific state-syntax configurations. The particulars of this process depend on the specific model universe – and in light of dual-aspect monism, the real self-modeling universe - in which the telic potential is actualized.

And now we come to what might be seen as the pivotal question: what is the goal of self-actualization?

Conveniently enough, this question contains its own answer: self-actualization, a generic analogue of Aristotelian final causation and thus of teleology, is its own inevitable outcome and thus its own goal.([39]To achieve causal closure with respect to final causation, a metacausal agency must self-configure in such a way that it relates to itself as the ultimate utility, making it the agency, act and product of its own self-configuration. This 3-way coincidence, called triality, follows from self-containment and implies that self-configuration is intrinsically utile, thus explaining its occurrence in terms of intrinsic utility.) Whatever its specific details may be, they are actualized by the universe alone, and this means that they are mere special instances of cosmic self-actualization. Although the word “goal” has subjective connotations – for example, some definitions stipulate that a goal must be the object of an instinctual drive or other subjective impulse – we could easily adopt a reductive or functionalist approach to such terms, taking them to reduce or refer to objective features of reality. Similarly, if the term “goal” implies some measure of design or pre-formulation, then we could easily observe that natural selection does so as well, for nature has already largely determined what “designs” it will accept for survival and thereby render fit.

Given that the self-containment of nature implies causal closure implies self-determinism implies self-actualization, how is self-actualization to be achieved? Obviously, nature must select some possible form in which to self-actualize. Since a self-contained, causally closed universe does not have the luxury of external guidance, it needs to generate an intrinsic self-selection criterion in order to do this. Since utility is the name already given to the attribute which is maximized by any rational choice function, and since a totally self-actualizing system has the privilege of defining its own standard of rationality([40]It might be objected that the term “rationality” has no place in the discussion…that there is no reason to assume that the universe has sufficient self-recognitional coherence or “consciousness” to be “rational”. However, since the universe does indeed manage to consistently self-recognize and self-actualize in a certain objective sense, and these processes are to some extent functionally analogous to human self-recognition and self-actualization, we can in this sense and to this extent justify the use of terms like “consciousness” and “rationality” to describe them. This is very much in the spirit of such doctrines as physical reductionism, functionalism and eliminativism, which assert that such terms devolve or refer to objective physical or functional relationships. Much the same reasoning applies to the term utility.), we may as well speak of this self-selection criterion in terms of global or generic self-utility. That is, the self-actualizing universe must generate and retrieve information on the intrinsic utility content of various possible forms that it might take.

The utility concept bears more inspection than it ordinarily gets. Utility often entails a subject-object distinction; for example, the utility of an apple in a pantry is biologically and psychologically generated by a more or less conscious subject of whom its existence is ostensibly independent, and it thus makes little sense to speak of its “intrinsic utility”. While it might be asserted that an apple or some other relatively non-conscious material object is “good for its own sake” and thus in possession of intrinsic utility, attributing self-interest to something implies that it is a subject as well as an object, and thus that it is capable of subjective self-recognition.([41]In computation theory, recognition denotes the acceptance of a language by a transducer according to its programming or “transductive syntax”. Because the universe is a self-accepting transducer, this concept has physical bearing and implications.) To the extent that the universe is at once an object of selection and a self-selective subject capable of some degree of self-recognition, it supports intrinsic utility (as does any coherent state-syntax relationship). An apple, on the other hand, does not seem at first glance to meet this criterion.

But a closer look again turns out to be warranted. Since an apple is a part of the universe and therefore embodies its intrinsic self-utility, and since the various causes of the apple (material, efficient and so on) can be traced back along their causal chains to the intrinsic causation and utility of the universe, the apple has a certain amount of intrinsic utility after all. This is confirmed when we consider that its taste and nutritional value, wherein reside its utility for the person who eats it, further its genetic utility by encouraging its widespread cultivation and dissemination. In fact, this line of reasoning can be extended beyond the biological realm to the world of inert objects, for in a sense, they too are naturally selected for existence. Potentials that obey the laws of nature are permitted to exist in nature and are thereby rendered “fit”, while potentials that do not are excluded.([42]The concept of potential is an essential ingredient of physical reasoning. Where a potential is a set of possibilities from which something is actualized, potential is necessary to explain the existence of anything in particular (as opposed to some other partially equivalent possibility).) So it seems that in principle, natural selection determines the survival of not just actualities but potentials, and in either case it does so according to an intrinsic utility criterion ultimately based on global self-utility.

It is important to be clear on the relationship between utility and causality. Utility is simply a generic selection criterion essential to the only cosmologically acceptable form of causality, namely self-determinism. The subjective gratification associated with positive utility in the biological and psychological realms is ultimately beside the point. No longer need natural processes be explained under suspicion of anthropomorphism; causal explanations need no longer implicitly refer to instinctive drives and subjective motivations. Instead, they can refer directly to a generic objective “drive”, namely intrinsic causality…the “drive” of the universe to maximize an intrinsic self-selection criterion over various relational strata within the bounds of its internal constraints.([43]Possible constraints include locality, uncertainty, blockage, noise, interference, undecidability and other intrinsic features of the natural world.) Teleology and scientific naturalism are equally satisfied; the global self-selection imperative to which causality necessarily devolves is a generic property of nature to which subjective drives and motivations necessarily “reduce”, for it distributes by embedment over the intrinsic utility of every natural system.

Intrinsic utility and natural selection relate to each other as both reason and outcome. When an evolutionary biologist extols the elegance or effectiveness of a given biological “design” with respect to a given function, as in “the wings of a bird are beautifully designed for flight”, he is really talking about intrinsic utility, with which biological fitness is thus entirely synonymous. Survival and its requisites have intrinsic utility for that which survives, be it an organism or a species; that which survives derives utility from its environment in order to survive and as a result of its survival. It follows that neo-Darwinism, a theory of biological causation whose proponents have tried to restrict it to determinism and randomness, is properly a theory of intrinsic utility and thus of self-determinism. Although neo-Darwinists claim that the kind of utility driving natural selection is non-teleological and unique to the particular independent systems being naturally selected, this claim is logically insupportable. Causality ultimately boils down to the tautological fact that on all possible scales, nature is both that which selects and that which is selected, and this means that natural selection is ultimately based on the intrinsic utility of nature at large.

The Stratification Problem

It is frequently taken for granted that neo-Darwinism and ID theory are mutually incompatible, and that if one is true, then the other must be false. But while this assessment may be accurate with regard to certain inessential propositions attached to the core theories like pork-barrel riders on congressional bills([44]Examples include the atheism and materialism riders often attached to neo-Darwinism, and the Biblical Creationism rider often mistakenly attached to ID theory.), it is not so obvious with regard to the core theories themselves. In fact, these theories are dealing with different levels of causality."

http://www.megafoundation.org/CTMU/Articles/Cheating_the_Millennium-final.pdf

"[45] Cognitive-perceptual syntax consists of (1) sets, posets or tosets of attributes (telons), (2) perceptual rules of external attribution for mapping external relationships into telons, (3) cognitive rules of internal attribution for cognitive (internal, non-perceptual) state-transition, and (4) laws of dependency and conjugacy according to which perceptual or cognitive rules of external or internal attribution may or may not act in a particular order or in simultaneity."

http://www.megafoundation.org/CTMU/Articles/Langan_CTMU_092902.pdf

"Measurement is aimed at assigning a value to “a quantity of a thing”. Therefore a clear statement of what a quantity is appears to be a required condition to interpret unambiguously the results of a measurement. However, the concept of quantity is seldom analyzed in detail in even the foundational works of metrology, and far too often, quantities are “defined” in terms of attributes, characteristics, qualities, etc. while leaving such terms in themselves undefined. The aim of this paper is to discuss the meaning of “quantity” (or, as it will be adopted here for the sake of generality, “attribute”) as it is used in measurement, also drawing several conclusions on the concept of measurement itself.

Author Keywords: Measurement theory; Measurement science; Concept of quantity"

http://cat.inist.fr/?aModele=afficheN&cpsidt=3194846

COLLOQUIUM LECTURES ON GEOMETRIC MEASURE THEORY

http://weber.math.washington.edu/~morrow/334_09/geoMeasure.pdf

"The fundamental mechanism of Operator Grammar is the dependency constraint: certain words (operators) require that one or more words (arguments) be present in an utterance. In the sentence John wears boots, the operator wears requires the presence of two arguments, such as John and boots. (This definition of dependency differs from other dependency grammars in which the arguments are said to depend on the operators.)

In each language the dependency relation among words gives rise to syntactic categories in which the allowable arguments of an operator are defined in terms of their dependency requirements. Class N contains words (e.g. John, boots) that do not require the presence of other words. Class ON contains the words (e.g. sleeps) that require exactly one word of type N. Class ONN contains the words (e.g. wears) that require two words of type N. Class OOO contains the words (e.g. because) that require two words of type O, as in John stumbles because John wears boots. Other classes include OO (is possible), ONNN (put), OON (with, surprise), ONO (know), ONNO (ask) and ONOO (attribute).

The categories in Operator Grammar are universal and are defined purely in terms of how words relate to other words, and do not rely on an external set of categories such as noun, verb, adjective, adverb, preposition, conjunction, etc. The dependency properties of each word are observable through usage and therefore learnable."

http://en.wikipedia.org/wiki/Operator_Grammar
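The dependency constraint is mechanical enough to check in a few lines. A minimal sketch, assuming a hand-written lexicon whose class strings follow the quote's notation (first letter is the word's own type, the remaining letters are the argument types it requires). The names `LEXICON` and `check` and the nested-tuple encoding of an utterance are hypothetical illustrations, not part of Harris's formalism.

```python
# Hypothetical lexicon using the quote's classes: "N" needs nothing,
# "ON" needs one N, "ONN" needs two Ns, "OOO" needs two Os.
LEXICON = {
    "John": "N",
    "boots": "N",
    "sleeps": "ON",
    "stumbles": "ON",
    "wears": "ONN",
    "because": "OOO",
}

def check(tree):
    """Return the type ('N' or 'O') of a well-formed utterance.

    A tree is either a bare word or a tuple (operator, arg1, arg2, ...).
    Raises ValueError when an operator's dependency requirements are unmet.
    """
    if isinstance(tree, str):
        word, args = tree, []
    else:
        word, *args = tree
    cls = LEXICON[word]
    required = cls[1:]  # argument types this word demands, in order
    if len(args) != len(required):
        raise ValueError(f"{word!r} needs {len(required)} argument(s), got {len(args)}")
    for arg, need in zip(args, required):
        got = check(arg)
        if got != need:
            raise ValueError(f"{word!r} expects an argument of type {need}, got {got}")
    return cls[0]  # the word's own type

print(check(("because", ("stumbles", "John"), ("wears", "John", "boots"))))
```

Under these assumptions the *because* sentence checks out as type O, while `check(("wears", "John"))` raises, since *wears* is an ONN word missing its second argument. Categories come only from what each word requires, as the quote describes.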

"As an example of the tautological nature of MAP, consider a hypothetical external scale of distance or duration in terms of which the absolute size or duration of the universe or its contents can be defined. Due to the analytic self-containment of reality, the functions and definitions comprising its self-descriptive manifold refer only to each other; anything not implicated in its syntactic network is irrelevant to structure and internally unrecognizable, while anything which is relevant is already an implicit ingredient of the network and need not be imported from outside. This implies that if the proposed scale is relevant, then it is not really external to reality; in fact, reality already contains it as an implication of its intrinsic structure.

In other words, because reality is defined on the mutual relevance of its essential parts and aspects, external and irrelevant are synonymous; if something is external to reality, then it is not included in the syntax of reality and is thus internally unrecognizable. It follows that with respect to that level of reality defined on relevance and recognition, there is no such thing as a “real but external” scale, and thus that the universe is externally undefined with respect to all measures including overall size and duration. If an absolute scale were ever to be internally recognizable as an ontological necessity, then this would simply imply the existence of a deeper level of reality to which the scale is intrinsic and by which it is itself intrinsically explained as a relative function of other ingredients. Thus, if the need for an absolute scale were ever to become recognizable within reality – that is, recognizable to reality itself - it would by definition be relative in the sense that it could be defined and explained in terms of other ingredients of reality. In this sense, MAP is a “general principle of relativity”.

...

The Principle of Attributive (Topological-Descriptive, State-Syntax) Duality

Where points belong to sets and lines are relations between points, a form of duality also holds between sets and relations or attributes, and thus between set theory and logic. Where sets contain their elements and attributes distributively describe their arguments, this implies a dual relationship between topological containment and descriptive attribution as modeled through Venn diagrams. Essentially, any containment relationship can be interpreted in two ways: in terms of position with respect to bounding lines or surfaces or hypersurfaces, as in point set topology and its geometric refinements (⊃T), or in terms of descriptive distribution relationships, as in the Venn-diagrammatic grammar of logical substitution (⊃D).

...

Because states express topologically while the syntactic structures of their underlying operators express descriptively, attributive duality is sometimes called state-syntax duality. As information requires syntactic organization, it amounts to a valuation of cognitive/perceptual syntax; conversely, recognition consists of a subtractive restriction of informational potential through an additive acquisition of information. TD duality thus relates information to the informational potential bounded by syntax, and perception (cognitive state acquisition) to cognition.

In a Venn diagram, the contents of circles reflect the structure of their boundaries; the boundaries are the primary descriptors. The interior of a circle is simply an “interiorization” or self-distribution of its syntactic “boundary constraint”. Thus, nested circles corresponding to identical objects display a descriptive form of containment corresponding to syntactic layering, with underlying levels corresponding to syntactic coverings.

This leads to a related form of duality, constructive-filtrative duality.

...

"However, this ploy does not always work. Due to the longstanding scientific trend toward physical reductionism, the buck often gets passed to physics, and because physics is widely considered more fundamental than any other scientific discipline, it has a hard time deferring explanatory debts mailed directly to its address. Some of the explanatory debts for which physics is holding the bag are labeled “causality”, and some of these bags were sent to the physics department from the evolutionary biology department. These debt-filled bags were sent because the evolutionary biology department lacked the explanatory resources to pay them for itself. Unfortunately, physics can’t pay them either.

The reason that physics cannot pay explanatory debts generated by various causal hypotheses is that it does not itself possess an adequate understanding of causality. This is evident from the fact that in physics, events are assumed to be either deterministic or nondeterministic in origin. Given an object, event, set or process, it is usually assumed to have come about in one of just two possible ways: either it was brought about by something prior and external to it, or it sprang forth spontaneously as if by magic. The prevalence of this dichotomy, determinacy versus randomness, amounts to an unspoken scientific axiom asserting that everything in the universe is ultimately either a function of causes external to the determined entity (up to and including the universe itself), or no function of anything whatsoever. In the former case there is a known or unknown explanation, albeit external; in the latter case, there is no explanation at all. In neither case can the universe be regarded as causally self-contained.

To a person unused to questioning this dichotomy, there may seem to be no middle ground. It may indeed seem that where events are not actively and connectively produced according to laws of nature, there is nothing to connect them, and thus that their distribution can only be random, patternless and meaningless. But there is another possibility after all: self-determinacy. Self-determinacy involves a higher-order generative process that yields not only the physical states of entities, but the entities themselves, the abstract laws that govern them, and the entire system which contains and coherently relates them. Self-determinism is the causal dynamic of any system that generates its own components and properties independently of prior laws or external structures. Because self-determinacy involves nothing of a preexisting or external nature, it is the only type of causal relationship suitable for a causally self-contained system.

In a self-deterministic system, causal regression leads to a completely intrinsic self-generative process. In any system that is not ultimately self-deterministic, including any system that is either random or deterministic in the standard extrinsic sense, causal regression terminates at null causality or does not terminate. In either of the latter two cases, science can fully explain nothing; in the absence of a final cause, even material and efficient causes are subject to causal regression toward ever more basic (prior and embedding) substances and processes, or if random in origin, toward primitive acausality. So given that explanation is largely what science is all about, science would seem to have no choice but to treat the universe as a self-deterministic, causally self-contained system. ([34]In any case, the self-containment of the real universe is implied by the following contradiction: if there were any external entity or influence that were sufficiently real to affect the real universe, then by virtue of its reality, it would by definition be internal to the real universe.)

And thus do questions about evolution become questions about the self-generation of causally self-contained, self-emergent systems. In particular, how and why does such a system self-generate?

http://www.megafoundation.org/CTMU/Articles/Langan_CTMU_092902.pdf
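The attributive-duality passage in the excerpt above rests on a familiar correspondence between set containment and logical entailment. A small sketch of that standard fact, assuming a finite universe (the numbers 1 to 20) and two example attributes. It only illustrates the set/logic side of the claim and does not model the rest of the construction.

```python
# Hypothetical finite universe of discourse; any finite set would do.
UNIVERSE = range(1, 21)

def divisible_by_4(x):
    return x % 4 == 0

def even(x):
    return x % 2 == 0

def extension(attribute):
    """Topological reading: the region (set) that an attribute bounds."""
    return {x for x in UNIVERSE if attribute(x)}

def entails(p, q):
    """Descriptive reading: everything described by p is also described by q."""
    return all(q(x) for x in UNIVERSE if p(x))

# Containment of extensions and entailment of attributes give the same answer.
for p, q in [(divisible_by_4, even), (even, divisible_by_4)]:
    print(p.__name__, q.__name__, extension(p) <= extension(q), entails(p, q))
```

In each row the two readings agree: one circle sits inside the other in a Venn diagram exactly when one attribute implies the other.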

Complex Systems from the Perspective of Category Theory: II. Covering Systems and Sheaves

"Motivated by foundational studies concerning the modelling and analysis of complex systems we propose a scheme based on category theoretical methods and concepts [1-7]. The essence of the scheme is the development of a coherent relativistic perspective in the analysis of information structures associated with the behavior of complex systems, effected by families of partial or local information carriers. It is claimed that the appropriate specification of these families, as being capable of encoding the totality of the content, engulfed in an information structure, in a preserving fashion, necessitates the introduction of compatible families, constituting proper covering systems of information structures. In this case the partial or local coefficients instantiated by contextual information carriers may be glued together forming a coherent sheaf theoretical structure [8-10], that can be made isomorphic with the original operationally or theoretically introduced information structure. Most importantly, this philosophical stance is formalized categorically, as an instance of the adjunction concept. In the same mode of thinking, the latter may be used as a formal tool for the expression of an invariant property, underlying the noetic picturing of an information structure attached formally with a complex system as a manifold. The conceptual grounding of the scheme is interwoven with the interpretation of the adjunctive correspondence between variable sets of information carriers and information structures, in terms of a communicative process of encoding and decoding."

http://philsci-archive.pitt.edu/1237/1/axiomath2.pdf
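The gluing of compatible local information into one coherent structure is easiest to see in the simplest concrete sheaf: plain functions on a finite discrete space. A sketch under that assumption. The paper works categorically and far more generally, and the function `glue` and the toy "carriers" below are hypothetical.

```python
from itertools import combinations

def glue(local_sections):
    """Glue local sections into one global section.

    Each section is a dict from the points of its open set to observed values.
    Gluing succeeds only if every pair of sections agrees on its overlap (the
    compatibility condition); the result then restricts back to each original
    section, as the gluing axiom requires for this sheaf of plain functions.
    """
    for s, t in combinations(local_sections, 2):
        for point in s.keys() & t.keys():
            if s[point] != t[point]:
                raise ValueError(f"incompatible at {point!r}: {s[point]!r} != {t[point]!r}")
    glued = {}
    for s in local_sections:
        glued.update(s)
    return glued

# Two partial "information carriers" observing overlapping parts of a system.
left = {"a": 0, "b": 1}
right = {"b": 1, "c": 2}
whole = glue([left, right])
print(whole)  # {'a': 0, 'b': 1, 'c': 2}
```

If the two carriers disagreed at `"b"`, no coherent global picture would exist and `glue` raises instead. That failure mode is why the quote insists on *compatible* families.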

Concurrent ontology and the extensional conception of attribute

"By analogy with the extension of a type as the set of individuals of that type, we define the extension of an attribute as the set of states of an idealized observer of that attribute, observing concurrently with observers of other attributes. The attribute-theoretic counterpart of an operation mapping individuals of one type to individuals of another is a dependency mapping states of one attribute to states of another. We integrate attributes with types via a symmetric but not self-dual framework of dipolar algebras or disheaves amounting to a type-theoretic notion of Chu space over a family of sets of qualia doubly indexed by type and attribute, for example the set of possible colors of a ball or heights of buildings. We extend the sheaf-theoretic basis for type theory to a notion of disheaf on a profunctor. Applications for this framework include the Web Ontology Language OWL, UML, relational databases, medical information systems, geographic databases, encyclopedias, and other data-intensive areas standing to benefit from a precise ontological framework coherently accommodating types and attributes.

Keywords: Attribute, Chu space, ontology, presheaf, type."

http://conconto.stanford.edu/conconto.pdf
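The abstract builds on Chu spaces: a set of points A, a set of states X, and a matrix r from A × X into a set K. A morphism (Chu transform) maps points forward and states backward, subject to one adjointness condition. A sketch of that plain construction, assuming K = {0, 1} and a toy two-ball example. It is not the paper's disheaf framework, and every name below is hypothetical.

```python
def is_chu_transform(A, r, X, B, s, Y, f, g):
    """Check whether (f, g) is a Chu transform (A, r, X) -> (B, s, Y).

    r and s are matrices stored as dicts keyed by (point, state).
    f maps points A -> B forward, g maps states Y -> X backward, and the
    adjointness condition s(f(a), y) == r(a, g(y)) must hold for all a, y.
    """
    return all(s[(f[a], y)] == r[(a, g[y])] for a in A for y in Y)

# A small Chu space over K = {0, 1}: two individuals, two yes/no states.
A, X = ["ball1", "ball2"], ["red", "round"]
r = {("ball1", "red"): 0, ("ball2", "red"): 1,
     ("ball1", "round"): 1, ("ball2", "round"): 1}

# A one-point, one-state target; both individuals collapse to one point.
B, Y = ["ball"], ["round?"]
s = {("ball", "round?"): 1}
f = {"ball1": "ball", "ball2": "ball"}

print(is_chu_transform(A, r, X, B, s, Y, f, {"round?": "round"}))  # True
print(is_chu_transform(A, r, X, B, s, Y, f, {"round?": "red"}))    # False
```

Collapsing both balls to one point is only legitimate for a state on which they already agree (roundness), not for one on which they differ (redness).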

"Now going back to our subject and the facts upheld by materialists. They state that inasmuch as it is proven and upheld by science that the life of phenomena depends upon composition and their destruction upon disintegration, then where comes in the need or necessity of a Creator -- the self-subsistent Lord?

For if we see with our own eyes that these infinite beings go through myriads of compositions and in every composition appearing under a certain form showing certain characteristics virtues, then we are independent of any divine maker.

597. This is the argument of the materialists. On the other hand those who are informed of divine philosophy answer in the following terms:

Composition is of three kinds.

1. Accidental composition.

2. Involuntary composition.

3. Voluntary composition.

There is no fourth kind of composition. Composition is restricted to these three categories.

If we say that composition is accidental, this is philosophically a false theory, because then we have to believe in an effect without a cause, and philosophically, no effect is conceivable without a cause. We cannot think of an effect without some primal cause, and composition being an effect, there must naturally be a cause behind it.

...

738. Consequently, the great divine philosophers have had the following epigram: All things are involved in all things. For every single phenomenon has enjoyed the postulates of God, and in every form of these infinite electrons it has had its characteristics of perfection.

Thus this flower once upon a time was of the soil. The animal eats the flower or its fruit, and it thereby ascends to the animal kingdom. Man eats the meat of the animal, and there you have its ascent into the human kingdom, because all phenomena are divided into that which eats and that which is eaten. Therefore, every primordial atom of these atoms, singly and indivisibly, has had its coursings throughout all the sentient creation, going constantly into the aggregation of the various elements. Hence do you have the conservation of energy and the infinity of phenomena, the indestructibility of phenomena, changeless and immutable, because life cannot suffer annihilation but only change.

The apparent annihilation is this: that the form, the outward image, goes through all these changes and transformations. Let us again take the example of this flower. The flower is indestructible. The only thing that we can see, this outer form, is indeed destroyed, but the elements, the indivisible elements which have gone into the composition of this flower are eternal and changeless. Therefore the realities of all phenomena are immutable. Extinction or mortality is nothing but the transformation of pictures and images, so to speak -- the reality back of these images is eternal. And every reality of the realities is one of the bounties of God.

Some people believe that the divinity of God had a beginning.

They say that before this particular beginning man had no knowledge of the divinity of God. With this principle they have limited the operation of the influences of God.

For example, they think there was a time when man did not exist, and that there will be a time in the future when man will not exist. Such a theory circumscribes the power of God, because how can we understand the divinity of God except through scientifically understanding the manifestations of the attributes of God?

How can we understand the nature of fire except from its heat, its light? Were not heat and light in this fire, naturally we could not say that the fire existed.

Thus, if there was a time when God did not manifest His qualities, then there was no God, because the attributes of God presuppose the creation of phenomena. For example, by present consideration we say that God is the creator. Then there must always have been a creation -- since the quality of creator cannot be limited to the moment when some man or men realize this attribute. The attributes that we discover one by one -- these attributes themselves necessarily anticipated our discovery of them. Therefore, God has no beginning and no ending; nor is His creation limited ever as to degree. Limitations of time and degree pertain to the forms of things, not to their realities. The effulgence of God cannot be suspended. The sovereignty of God cannot be interrupted.

As long as the sovereignty of God is immemorial, therefore the creation of our world throughout infinity is presupposed. When we look at the reality of this subject, we see that the bounties of God are infinite, without beginning and without end."

http://bahai-library.com/compilations/bahai.scriptures/7.html

"In practice, systems incorporating reactive planning tend to be autonomous systems proactively pursuing at least one, and often many, goals. What defines anticipation in an AI model is the explicit existence of an inner model of the environment for the anticipatory system (sometimes including the system itself). For example, if the phrase it will probably rain were computed on line in real time, the system would be seen as anticipatory.

In 1985, Robert Rosen defined an anticipatory system as follows [1]:

A system containing a predictive model of itself and/or its environment, which allows it to change state at an instant in accord with the model's predictions pertaining to a later instant.

In Rosen's work, analysis of the example : "It's raining outside, therefore take the umbrella" does involve a prediction. It involves the prediction that "If it is raining, I will get wet out there unless I have my umbrella". In that sense, even though it is already raining outside, the decision to take an umbrella is not a purely reactive thing. It involves the use of predictive models which tell us what will happen if we don't take the umbrella, when it is already raining outside.

To some extent, Rosen's definition of anticipation applies to any system incorporating machine learning. At issue is how much of a system's behaviour should or indeed can be determined by reasoning over dedicated representations, how much by on-line planning, and how much must be provided by the system's designers."

http://en.wikipedia.org/wiki/Anticipation_(artificial_intelligence)
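Rosen's umbrella analysis can be made concrete: the present action is chosen by consulting a model's prediction about a later state, not by a direct stimulus-response table. A minimal sketch, assuming a hypothetical one-rule internal model. The functions `predict_wet` and `choose` are illustrations, not anything from Rosen's work.

```python
def predict_wet(raining, take_umbrella):
    """Hypothetical internal model: predicts a later state
    ("will I get wet out there?") from the present situation and an action."""
    return raining and not take_umbrella

def choose(raining):
    """Change state now in accord with the model's prediction about later:
    pick the action whose predicted outcome is best (stay dry), breaking
    ties in favour of not carrying the umbrella."""
    options = [False, True]  # leave the umbrella, take the umbrella
    return min(options, key=lambda take: (predict_wet(raining, take), take))

print(choose(raining=True))   # True: take the umbrella
print(choose(raining=False))  # False: leave it
```

Even though it is already raining, the decision comes from evaluating "what happens if I don't take it", which is the predictive step the quote points to.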

"In order to take advantage of model-based behavior it is necessary to be able to properly describe the surroundings in terms of how they are perceived. Such description processes are inductive and not recursively describable. That a system can perceive and describe its own surroundings means further that it has a learning capability. Learning is the process of making order out of disorder and this is precisely the most distinguishing quality of inductive inference. Genuine learning without inductive capability is impossible. The implication of this is that systems that have a model of the surroundings are not possible to implement on computers nor can computers be learning devices contrary to what is believed in the area of machine learning."

http://www.cs.lth.se/home/Bertil_Ekdahl/publications/AIandL.pdf

"Deduction. Apply a general principle to infer some fact.

Induction. Assume a general principle that explains many facts.

Abduction. Guess a new fact that implies some given fact.

...

Ibn Taymiyya admitted that deduction in mathematics is certain. But in any empirical subject, universal propositions can only be derived by induction, and induction must be guided by the same principles of evidence and relevance used in analogy. Figure 3 illustrates his argument: Deduction proceeds from a theory containing universal propositions. But those propositions must have earlier been derived by induction with the same criteria used for analogy. The only difference is that induction produces a theory as intermediate result, which is then used in a subsequent process of deduction. By using analogy directly, legal reasoning dispenses with the intermediate theory and goes straight from cases to conclusion. If the theory and the analogy are based on the same evidence, they must lead to the same conclusions.

...

In analogical reasoning, the question Q leads to the same schematic anticipation, but instead of triggering the if-then rules of some theory, the unknown aspects of Q lead to the cases from which a theory could have been derived. The case that gives the best match to the given case P may be assumed as the best source of evidence for estimating the unknown aspects of Q; the other cases show possible alternatives. For each new case P′, the same principles of evidence, relevance, and significance must be used. The same kinds of operations used in induction and deduction are used to relate the question Q to some corresponding part Q′ of the case P′. The closer the agreement among the alternatives for Q, the stronger the evidence for the conclusion. In effect, the process of induction creates a one-size-fits-all theory, which can be used to solve many related problems by deduction. Case-based reasoning, however, is a method of bespoke tailoring for each problem, yet the operations of stitching propositions are the same for both.

Creating a new theory that covers multiple cases typically requires new categories in the type hierarchy. To characterize the effects of analogies and metaphors, Way (1991) proposed dynamic type hierarchies, in which two or more analogous cases are generalized to a more abstract type T that subsumes all of them. The new type T also subsumes other possibilities that may combine aspects of the original cases in novel arrangements. Sowa (2000) embedded the hierarchies in an infinite lattice of all possible theories. Some of the theories are highly specialized descriptions of just a single case, and others are very general. The most general theory at the top of the lattice contains only tautologies, which are true of everything. At the bottom is the contradictory or absurd theory, which is true of nothing. The theories are related by four operators: analogy and the belief revision operators of contraction, expansion, and revision (Alchourrón et al. 1985). These four operators define pathways through the lattice (Figure 4), which determine all possible ways of deriving new theories from old ones."

http://www.jfsowa.com/pubs/cogcat.htm
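The case-based procedure in the Sowa excerpt can be sketched directly. The best-matching case supplies the estimate for the unknown aspect of Q, the other close cases act as alternatives, and their agreement measures the strength of the evidence. A sketch assuming a naive similarity (count of matching features). The function `by_analogy` and the weather cases are hypothetical, and this is not Sowa's own algorithm.

```python
from collections import Counter

def by_analogy(question, cases, target, k=3):
    """Estimate question[target] from the k cases that best match the
    question's known features. Returns (estimate, agreement), where the
    estimate comes from the single best match and agreement is the
    fraction of the k closest cases that share that estimate."""
    def overlap(case):
        return sum(1 for feature, value in question.items() if case.get(feature) == value)
    ranked = sorted(cases, key=overlap, reverse=True)[:k]  # stable on ties
    votes = Counter(case[target] for case in ranked)
    best = ranked[0][target]
    return best, votes[best] / len(ranked)

cases = [
    {"sky": "grey", "wind": "strong", "rain": True},
    {"sky": "grey", "wind": "calm", "rain": True},
    {"sky": "clear", "wind": "calm", "rain": False},
    {"sky": "grey", "wind": "strong", "rain": True},
]
print(by_analogy({"sky": "grey", "wind": "strong"}, cases, "rain"))
print(by_analogy({"sky": "clear", "wind": "strong"}, cases, "rain"))
```

The first question gets a unanimous answer. The second gets the same estimate with weaker support, because its closest cases disagree. No intermediate theory is ever built.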

"Landauer and Bellman define semiotics as “the study of the appearance (visual or otherwise), meaning, and use of symbols and symbol systems.” From their examination of classification by biological systems, they conclude that it would require a radical shift in how symbols are represented in computers to emulate the biological classification process in hardware. However, they argue that semiotic theory should provide the theoretical basis for just such a radical shift. Landauer and Bellman do not claim to have discovered the unifying semiotic principle of pattern-recognition, but they suggest that it must be inductive in character.

Indeed, the development of a unified inductive-learning model is the key to artificial intelligence. Induction is defined as a mode of reasoning that increases the information content of a given body of data. The application to pattern-recognition in general is obvious. An inductive pattern recognizer would learn the common characterizing attributes of all (possibly infinitely many) members of a class from observation of a finite (preferably small) set of samples from the class and a finite set of samples not from the class. The problem arises due to the fact that the commonly used “learning” paradigms (neural nets, nearest neighbor algorithms, etc.) are based on identifying boundaries between classes, and are incapable of inductive learning.

How then should induction be performed? The leading thinkers in machine intelligence believe it should somehow emulate the process used in biological systems. That process appears to be model-based. Rosen provides an explanation for anticipatory behavior of biological systems in terms of interacting models.

Rosen shows that traditional reductionist modeling does not provide simple explanations for complex behavior. What seems to be complex behavior in such models is in fact an artifact of extrapolating the model outside its effective range. Genuine complex behavior must be described by anticipatory modeling. In Rosen’s words: “In particular, complex systems may contain sub-systems which act as predictive models of themselves and/or their environments, whose predictions regarding future behaviors can be utilized for modulation of present change of state. Systems of this type act in a truly anticipatory fashion, and possess many novel properties whose properties have never been explored.” In other words, genuine complexity is characterized by anticipation.

What is the best way to obtain the models required for an AS? The simple answer is to observe reality to a finite extent and then to generalize from the observations. To do so is inherently to add information to the data, or to perform an induction. It requires the generation of a likely principle based on incomplete information, and the principle may later be improved in the light of increasing knowledge. Where several possible models might achieve a desired goal, the best choice is driven by the relative economy of different models in reaching the goal.

4. BAYESIAN PARAMETER ESTIMATION

How might this be done in practice with noisy data? The most powerful method is Bayesian parameter estimation. Bayesian drops irrelevant parameters without loss of precision in describing relevant parameters. It fully exploits prior knowledge. Most important, the computation of the most probable values of a parameter set incidentally includes the measure of the probability. That is, the calculation produces an estimate of its own goodness. By comparing the goodness of alternative models, the best available description of the underlying reality is obtained. This is the optimal method of obtaining a model from experimental data or of predicting the occurrence of future events given knowledge from the past, and of improving the prediction of the future as knowledge of the past improves. Bayesian parameter estimation is a straightforward method of induction."

http://www.osti.gov/bridge/servlets/purl/3466-JywdqC/webviewable/3466.pdf
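The two claims in that last paragraph, that the estimate carries its own measure of goodness and that alternative models can be compared, show up in the simplest conjugate example. A sketch assuming a coin with unknown bias p, a uniform prior, and k heads in n tosses. The example and function names are illustrations, not taken from the paper.

```python
from math import factorial, sqrt

def posterior_summary(k, n):
    """Unknown bias p, uniform prior, k heads in n tosses (n > 0).
    The posterior is Beta(k+1, n-k+1): return its mode (the most probable p)
    and its standard deviation (the estimate's own measure of goodness)."""
    a, b = k + 1, n - k + 1
    mode = k / n
    sd = sqrt(a * b / ((a + b) ** 2 * (a + b + 1)))
    return mode, sd

def evidence_unknown_bias(k, n):
    """P(this particular sequence | p unknown, uniform prior) = k!(n-k)!/(n+1)!"""
    return factorial(k) * factorial(n - k) / factorial(n + 1)

def evidence_fair(n):
    """P(this particular sequence | p fixed at 1/2)."""
    return 0.5 ** n

# Bayes factor: how much more the data support "unknown bias" over "fair coin".
for k in (9, 5):
    ratio = evidence_unknown_bias(k, 10) / evidence_fair(10)
    print(k, posterior_summary(k, 10), round(ratio, 2))
```

With 9 heads in 10 the flexible model wins. With 5 in 10 the simpler fair-coin model is favoured, because the evidence automatically penalizes a parameter the data do not need, which is one reading of "drops irrelevant parameters".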

There are many interesting ways to use generalized manifolds.

"Briefly, in this scheme teleomatic systems are classified as end-resulting, teleonomic as end-directed, and teleological are end-seeking."

http://www.springerlink.com/content/n065p12442750447/fulltext.pdf

"The teleonomic logic of evolution dictates that if animals with a more accurate representation of their environment have a better chance of survival, then over time they will develop mental models that are congruent with the laws of physics. The world around us consists mainly of what are described mathematically as differentiable manifolds; these include the curves, surfaces and solids that form the substrate for equations involving space, time, frequency, mass and force. A computational manifold is an abstract computing element that can exactly model the physical quantity it describes. A computational map is a computational manifold together with a parameterization, a discretization and an encoding. These completely specify the mesh of numerical values that represent the manifold and permit its realization within a digital computer or a biological nervous system. Just as the current density in Maxwell’s equations or the mass density in the Navier-Stokes equation define derivatives over large numbers of discrete electrons and molecules, a computational manifold can define a continuum over a computational map composed of discrete living cells. The fidelity of the representation is dependent not just on the resolution, but also on how each computing element behaves. In particular, since the physical phenomena may contain discontinuities, the encoding must be amenable to the calculation of Lebesgue integrals.

...

Audio spectrograms, which can be viewed as images in a two-dimensional product space of frequency and time, are the principal components in a language simulacra. Associating sounds with other manifolds, and those manifolds with other sounds, under the control of a continuous formal system, provides a framework for language and the communication of ideas. Areas of the neocortex can be modeled as associative memories that accept images as input addresses and produce images as outputs. Language and geometric reasoning simulacra comprising networks of CAMMs and projections from sensory-motor manifolds form the basis of conscious reflection. The mathematics that describes these surfaces and volumes of computation, the functional relationships between them, and the computations they perform provide a unified theory that can be used to model natural intelligence."

http://citeseerx.ist.psu.edu/viewdoc/summary?doi=10.1.1.161.4245
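The paper defines a computational map as a computational manifold plus a parameterization, a discretization, and an encoding. A toy sketch of those three ingredients, assuming the manifold is the unit circle and the encoding is fixed-width integer quantization. The circle, the quantization scheme, and all function names are assumptions for illustration. The point is the quote's remark that fidelity depends on resolution.

```python
from math import cos, sin, pi, dist

def parameterize(theta):
    """Parameterization of the manifold (here, the unit circle)."""
    return (cos(theta), sin(theta))

def discretize(n):
    """Discretization: n evenly spaced parameter values."""
    return [2 * pi * i / n for i in range(n)]

def encode(point, bits=8):
    """Encoding: quantize each coordinate in [-1, 1] to an integer code."""
    levels = 2 ** bits - 1
    return tuple(round((c + 1) / 2 * levels) for c in point)

def decode(code, bits=8):
    """Map integer codes back to coordinates in [-1, 1]."""
    levels = 2 ** bits - 1
    return tuple(2 * c / levels - 1 for c in code)

def circumference(n, bits=8):
    """Recover a manifold quantity (arc length) from the encoded mesh."""
    mesh = [decode(encode(parameterize(t), bits), bits) for t in discretize(n)]
    return sum(dist(mesh[i], mesh[(i + 1) % n]) for i in range(n))

for n in (6, 100, 1000):
    print(n, circumference(n, bits=16))
```

With six samples the recovered length is roughly a hexagon's perimeter (about 6). As the resolution grows it approaches 2π, and coarser `bits` add quantization error on top.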

I came across the idea of varifolds a while back. It's interesting how they can be used in machine learning, managing complexity or modeling. I was thinking they may be useful in bridging analog vs. digital approaches.

Learning on Varifolds:

"Popular manifold learning algorithms (e.g., [2, 3, 4]) typically assume that high dimensional data lie on a lower dimensional manifold. This is a reasonable assumption since many data are generated by physical processes having a few free parameters. Tangent spaces for manifolds are the generalization of tangent planes for surfaces, and are used for instance in [5] for machine learning by locally fitting the tangent space of each data point and extracting local geometric information. One problem with tangent spaces is that they may not exist for non-differentiable manifolds, and since data commonly do not come continuously, we do not have enough reason to believe that we can always achieve reliable tangent space estimation. Therefore, in this paper, we argue to use varifolds (see next section for a definition), which accommodate the discrete nature of data, instead of differentiable manifolds as the underlying models of data and present an algorithm based on hypergraphs that facilitate the computation required for typical machine learning applications. We organize the paper as follows: we review the basic concept of manifolds and varifolds in Section 2, and introduce hypergraphs in Section 3, followed by our main varifold learning algorithm in Section 4. We give experimental validations on toy and real data in Section 5 and finally conclude the paper with future research questions."

http://www.cse.ohio-state.edu/~dinglei/ding_mlsp08.pdf
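The excerpt says the varifold method rests on hypergraphs but does not give the construction. A minimal sketch, assuming one hyperedge per data point (the point plus its k nearest neighbours) and the normalized hypergraph Laplacian of Zhou, Huang and Schölkopf (2006), L = I - Dv^(-1/2) H W De^(-1) H^T Dv^(-1/2), which is a common tool for learning on hypergraphs. The paper's own construction may differ, and `knn_hyperedges` / `hypergraph_laplacian` are hypothetical names.

```python
import math

def knn_hyperedges(points, k):
    """Assumed construction: one hyperedge per point = the point plus its k nearest neighbours."""
    edges = []
    for i, p in enumerate(points):
        dists = sorted((math.dist(p, q), j) for j, q in enumerate(points) if j != i)
        edges.append({i} | {j for _, j in dists[:k]})
    return edges

def hypergraph_laplacian(n, edges, weights=None):
    """Normalized hypergraph Laplacian L = I - Dv^-1/2 H W De^-1 H^T Dv^-1/2 (dense lists)."""
    if weights is None:
        weights = [1.0] * len(edges)
    # Vertex degree d(v): total weight of the hyperedges that contain v.
    dv = [sum(w for e, w in zip(edges, weights) if v in e) for v in range(n)]
    lap = [[0.0] * n for _ in range(n)]
    for u in range(n):
        for v in range(n):
            # Each shared hyperedge contributes w(e) / |e| (the De^-1 factor).
            theta = sum(w / len(e) for e, w in zip(edges, weights) if u in e and v in e)
            theta /= math.sqrt(dv[u] * dv[v])
            lap[u][v] = (1.0 if u == v else 0.0) - theta
    return lap

# Two well-separated clusters of three points each.
pts = [(0, 0), (0, 1), (1, 0), (5, 5), (5, 6), (6, 5)]
edges = knn_hyperedges(pts, k=2)
lap = hypergraph_laplacian(len(pts), edges)
print(round(lap[0][0], 4))  # 0.6667
```

As with an ordinary graph Laplacian, the eigenvectors with the smallest eigenvalues could then feed clustering or label propagation. Here the two clusters share no hyperedge, so the Laplacian is block-diagonal.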

Minimal Surfaces, Stratified Multivarifolds, and the Plateau Problem:

"Soap films, or, more generally, interfaces between physical media in equilibrium, arise in many applied problems in chemistry, physics, and also in nature. In applications, one finds not only two-dimensional but also multidimensional minimal surfaces that span fixed closed "contours" in some multidimensional Riemannian space. An exact mathematical statement of the problem of finding a surface of least area or volume requires the formulation of definitions of such fundamental concepts as a surface, its boundary, minimality of a surface, and so on. It turns out that there are several natural definitions of these concepts, which permit the study of minimal surfaces by different, and complementary, methods."

...

Dao Trong Thi formulated the new concept of a multivarifold, which is the functional analog of a geometrical stratified surface and enables us to solve Plateau's problem in a homotopy class."

http://books.google.com/books?id=mncIV2c5Z4sC&source=gbs_navlinks_s
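Plateau's problem asks for the least-area surface spanning a given contour. As a hedged illustration only: when the surface is nearly flat, the minimal-surface equation is approximately Laplace's equation. A discretized soap film can therefore be relaxed by repeatedly replacing each interior height with the mean of its four neighbours. This small-slope linearization is an assumption of the sketch, it does not capture the varifold or multivarifold machinery the book uses, and `relax_membrane` is a hypothetical name.

```python
def relax_membrane(boundary, n, iterations=2000):
    """Jacobi relaxation of a height field z[y][x] on an n x n grid whose edge heights
    are fixed by boundary(x, y). Under the small-slope assumption the fixed point
    approximates a soap film spanning that 'wire'."""
    z = [[boundary(x, y) if x in (0, n - 1) or y in (0, n - 1) else 0.0
          for x in range(n)] for y in range(n)]
    for _ in range(iterations):
        new = [row[:] for row in z]
        for y in range(1, n - 1):
            for x in range(1, n - 1):
                # Each interior point moves to the average of its four neighbours.
                new[y][x] = (z[y][x - 1] + z[y][x + 1] + z[y - 1][x] + z[y + 1][x]) / 4.0
        z = new
    return z

# A saddle-shaped wire: the interior relaxes to the same hyperbolic paraboloid.
film = relax_membrane(lambda x, y: (x - 2.5) * (y - 2.5), 6)
print(round(film[1][2], 6))  # 0.75
```

The saddle boundary is used because (x - a)(y - b) is harmonic, even on the grid, so the relaxed interior can be checked exactly.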

"Perhaps less colorfully, Cleland (1993) argues the related point that computational devices limited to discrete numbers (i.e. Turing machines) can not compute many physically realized functions. Presumably this limitation is avoided by analog computers and brains.

Further arguments for cognitive continuity arise from a different sort of computational consideration. Consider a simple soap bubble, whose behavior can be used to compute extremely complex force-resolving functions (see Uhr, 1994). The interactions of molecular forces which can be used to represent certain macro-phenomena are far too complex for a digital computer to compute on a reasonable time scale. It is simply a fact that, in certain circumstances, analog computation is more efficient than digital computation. Coupled with arguments for the efficiency of many evolved systems, we should conclude that it is reasonable to expect the brain to be analog.

...

However, analog computation has a far more serious concern with regard to explaining cognitive function. If computation in the brain is fundamentally analog, serious problems arise as to how parts of the brain are able to communicate (Hammerstrom, 1995). It is notoriously difficult to ‘read off’ the results of an analog computation. Analog signals, because of their infinite information content, are extremely difficult to transmit in their entirety. Particularly since brain areas seem somewhat specialized in their computational tasks, there must be a means of sending understandable messages to other parts of the brain. Not only are analog signals difficult to transmit, analog computers have undeniably lower and more variable accuracy than digital computers. This is inconsistent with the empirical evidence for the reproducibility of neuronal responses (Mainen and Sejnowski, 1995).

...

Many who have discussed the continuity debate have arrived at an ecumenical conclusion similar in spirit to the following (Uhr 1994, p. 349):

The brain clearly uses mixtures of analog and digital processes. The flow and fusion of neurotransmitter molecules and of photons into receptors is quantal (digital); depolarization and hyperpolarization of neuron membranes is analog; transmission of pulses is digital; and global interactions mediated by neurotransmitters and slow waves appear to be both analog and digital.

...

In retrospect, Kant seems to have identified both the deeper source of the debate and, implicitly, its resolution. He notes that the tension between continuity and discreteness arises from a parallel tension between the certainty of theory and the uncertainty of how the real world is; in short, between theory and implementation. If we focus on theory, it is not clear that the continuity of the brain can be determined. But, since the brain is a real world system, implementational constraints apply. In particular, the effects of noise on limiting information transfer allow us to quantify the information transmission rates of real neurons. These rates are finite and discretely describable. Thus, a theory informed by implementation has solved our question, at least at one level of description.

Whether the brain is discrete at a higher level of description is still open to debate. Employing the provided definitions, it is possible to again suggest that the brain is discrete with a time step of 10ms as first proposed by Newell. Empirical evidence can then be brought to bear on this question, likely resulting in its being disproved. Similarly, one might propose that the brain is continuous at a similar level of description. Again, empirical evidence can be used to evaluate such a claim. However, what has been shown here is that the brain is not continuous ‘all the way down’. There are principled reasons for considering the cognitively relevant aspects of the brain to be discrete at a time step of about one millisecond. In a sense, this does not resolve all questions concerning the analogicity of the brain, but it resolves perhaps the most fundamental one: Can the brain ever be considered digital for explaining cognitive phenomena? The answer, it seems, is “Yes”."

http://watarts.uwaterloo.ca/~celiasmi/Papers/ce.2000.continuity.debate.csq.html
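The argument that noise makes neural transmission rates finite can be made concrete with the Shannon-Hartley formula for a band-limited channel with additive white Gaussian noise, C = B log2(1 + S/N). The sketch below only evaluates that formula. The bandwidth and signal-to-noise values are illustrative numbers, not measurements of real neurons, and treating a neuron as a Gaussian channel is a simplifying assumption.

```python
import math

def shannon_capacity(bandwidth_hz, snr):
    """Shannon-Hartley capacity in bits/s; snr is the linear signal-to-noise
    power ratio (not decibels)."""
    if bandwidth_hz < 0 or snr < 0:
        raise ValueError("bandwidth and SNR must be non-negative")
    return bandwidth_hz * math.log2(1.0 + snr)

# Illustrative numbers only: 100 Hz of bandwidth at a signal-to-noise ratio of 3.
print(shannon_capacity(100, 3))   # 200.0
# Quintupling the SNR only doubles the rate; any finite noise keeps it finite.
print(shannon_capacity(100, 15))  # 400.0
```

At a 1 ms time step, 200 bits/s works out to about 0.2 bits per step, which is the sense in which a noisy channel is "finitely and discretely describable".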

Symbols, neurons, soap-bubbles and the neural computation underlying cognition

A wide range of systems appear to perform computation: what common features do they share? I consider three examples: a digital computer, a neural network and an analogue route-finding system based on soap bubbles. The common feature of these systems is that they have autonomous dynamics: their states will change over time without additional external influence. We can take advantage of these dynamics if we understand them well enough to map a problem we want to solve onto them. Programming consists of arranging the starting state of a system so that the effects of the system's dynamics on some of its variables correspond to the effects of the equations which describe the problem to be solved on their variables. The measured dynamics of a system, and hence the computation it may be performing, depend on the variables of the system we choose to attend to. Although we cannot determine which are the appropriate variables to measure in a system whose computational basis is unknown to us, I go on to discuss how grammatical classifications of computational tasks and symbolic machine reconstruction techniques may allow us to rule out some measurements of a system from contributing to computation of particular tasks. Finally, I suggest that these arguments and techniques imply that symbolic descriptions of the computation underlying cognition should be stochastic and that symbols in these descriptions may not be atomic but may have contents in alternative descriptions.

http://www.springerlink.com/content/hl5701427667q6p7/
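The abstract frames programming as arranging a system's starting state so that its own dynamics do the work. Route finding can be cast this way in software: set the source's distance to 0 and every other node's to infinity, then let each node repeatedly relax its neighbours until the state stops changing. This is a digital, Bellman-Ford-style analogue of the idea, not the soap-bubble system itself. It assumes non-negative edge lengths, and `settle` is a hypothetical name.

```python
import math

def settle(graph, source):
    """Run a local update rule to a fixed point.
    graph: dict node -> list of (neighbour, length) for directed edges; every
    neighbour must also be a key. Lengths are assumed non-negative.
    The 'program' is only the initial state: 0 at the source, infinity elsewhere."""
    state = {node: math.inf for node in graph}
    state[source] = 0.0
    changed = True
    while changed:  # autonomous dynamics: keep going until nothing moves
        changed = False
        for node, edges in graph.items():
            for nbr, length in edges:
                if state[node] + length < state[nbr]:
                    state[nbr] = state[node] + length
                    changed = True
    return state

g = {"A": [("B", 1), ("C", 4)], "B": [("C", 2), ("D", 5)], "C": [("D", 1)], "D": []}
print(settle(g, "A"))  # {'A': 0.0, 'B': 1.0, 'C': 3.0, 'D': 4.0}
```

Reading off the answer means attending to the right variables: here the settled `state` values are shortest-path lengths, while the order in which updates happened carries no meaning for the task.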

"These programs all measure and utilize the P300 wave response in the brain, which was discussed in the previous post. The P300 wave response is an electrical signal that rises in the brain in response to recognition of ‘objects of significance’. The response is uber fast – the 300 in P300 stands for 300 milliseconds. Dr. Larry Farwell is credited as the ‘discoverer’ of the practical applications of this response. His Brainwave Fingerprinting technology uses P300 diagnostics for criminal justice and credibility assessment applications.

The NIA program uses the P300 wave to accelerate Imagery Intelligence (IMINT) analysis. IMINT analysts pore through large amounts of photographic imagery and scan this imagery for ‘objects of significance’, which can mean Improvised Explosive Devices (IEDs) or other objects. Usually, the IMINT process is slow and tedious. An analyst has to crawl through piles of data, scanning every bit more or less slowly, virtually in search of needles in a haystack. The NIA program speeds up this process, a lot. An analyst wears an EEG cap, and this cap measures the P300 response while the analyst is doing IMINT tasks. If the analyst sees an ‘object of significance’, software automatically tags that IMINT bit as relevant, and it is later given further scrutiny. Using this methodology, analysts can course through 10-20 images per second. There is no conscious scanning of the information; the entire process occurs subliminally. Pretty amazing!"

http://www.6gw.org/category/neurotechnology/
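The excerpt says software tags an image when the analyst's EEG shows a P300. Practical systems of this kind are considerably more elaborate; the toy sketch below simply thresholds the mean amplitude of a single-channel epoch in a 250-500 ms post-stimulus window. The window, threshold, sampling rate and data layout are all assumptions, `p300_score` and `tag_images` are hypothetical names, and at 10-20 images per second consecutive epochs overlap, which this sketch ignores.

```python
def p300_score(epoch, fs_hz, window_ms=(250, 500)):
    """Mean amplitude of a single-channel epoch (samples time-locked to image onset)
    inside the post-stimulus window where a P300 would be expected."""
    start = int(window_ms[0] * fs_hz / 1000)
    stop = int(window_ms[1] * fs_hz / 1000)
    segment = epoch[start:stop]
    if not segment:
        raise ValueError("epoch is shorter than the analysis window")
    return sum(segment) / len(segment)

def tag_images(epochs, fs_hz, threshold):
    """Indices of images whose score exceeds a (hypothetical) threshold; these are
    the images that would be queued for closer human review."""
    return [i for i, ep in enumerate(epochs) if p300_score(ep, fs_hz) > threshold]

# Synthetic data at 100 Hz: 800 ms epochs, one with a positive deflection at 250-500 ms.
flat = [0.0] * 80
bump = [0.0] * 25 + [8.0] * 25 + [0.0] * 30
print(tag_images([flat, bump, flat], fs_hz=100, threshold=5.0))  # [1]
```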

"Two competing methodologies in procedural content generation are teleological and ontogenetic. The teleological approach creates an accurate physical model of the environment and the process that creates the thing generated, and then simply runs the simulation, and the results should emerge as they do in nature.

The ontogenetic approach observes the end results of this process and then attempts to directly reproduce those results by ad hoc algorithms. Ontogenetic approaches are more commonly used in real-time applications such as games. (See "Shattering Reality," Game Developer, August 2006.)

A similar (overlapping) distinction is "top down" versus "bottom up": top-down algorithms directly modify/create things to obtain the desired result, and bottom-up algorithms create a result that may only have what you want as a side effect (I personally prefer that terminology; it's easier to remember which is which. But they may not refer to exactly the same thing.)

This distinction is visible in map generation when you compare your generated map to your desired topology - whether all points can be reached, whether certain specific points (enter? exit? starting point? goal?) are connected to each other, whether there are choke points, etc.

If you're generating a map using Perlin Noise for height, which in turn determines which areas can be crossed (not those above / below a certain height, or with a certain slope), you can't guarantee anything about topology.

But many dungeon and maze generation algorithms directly place rooms and corridors so that they're connected (though some may screw up along the way).

There's a general tradeoff between having good-looking and interesting maps, versus preserving your topological constraints - or more generally, generating something challenging but not completely impossible. You can also loosen up the importance of topological constraints, for example with destructible environments (digging, bombs), or allowing the player to re-generate levels until he finds one he likes."

http://pcg.wikidot.com/pcg-algorithm:teleological-vs-ontogenetic
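The contrast between a bottom-up height map and top-down room placement can be sketched as follows. Assumptions: a smoothed random grid stands in for Perlin noise, cells below a height cutoff are passable, and rooms are joined by L-shaped corridors. All function names are hypothetical. The height map's connectivity can only be checked after the fact with a flood fill, whereas the room-and-corridor map is connected by construction.

```python
import random
from collections import deque

def height_map(w, h, seed):
    """Bottom-up: smoothed random heights in [0, 1), a crude stand-in for Perlin noise."""
    rng = random.Random(seed)
    raw = [[rng.random() for _ in range(w)] for _ in range(h)]
    smooth = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            # 3x3 box blur, clipped at the borders
            cells = [raw[j][i]
                     for j in range(max(0, y - 1), min(h, y + 2))
                     for i in range(max(0, x - 1), min(w, x + 2))]
            smooth[y][x] = sum(cells) / len(cells)
    return smooth

def reachable(passable, start, goal):
    """Breadth-first flood fill over 4-connected passable cells; points are (x, y)."""
    h, w = len(passable), len(passable[0])
    if not (passable[start[1]][start[0]] and passable[goal[1]][goal[0]]):
        return False
    seen, queue = {start}, deque([start])
    while queue:
        x, y = queue.popleft()
        if (x, y) == goal:
            return True
        for nx, ny in ((x + 1, y), (x - 1, y), (x, y + 1), (x, y - 1)):
            if 0 <= nx < w and 0 <= ny < h and passable[ny][nx] and (nx, ny) not in seen:
                seen.add((nx, ny))
                queue.append((nx, ny))
    return False

def carve_dungeon(w, h, n_rooms, seed):
    """Top-down: place rooms directly, then join each room to the previous one with an
    L-shaped corridor, so all floor is connected by construction (needs w, h >= 8)."""
    rng = random.Random(seed)
    grid = [[False] * w for _ in range(h)]  # True = floor
    centers = []
    for _ in range(n_rooms):
        rw, rh = rng.randint(3, 6), rng.randint(3, 6)
        x0, y0 = rng.randint(1, w - rw - 1), rng.randint(1, h - rh - 1)
        for y in range(y0, y0 + rh):
            for x in range(x0, x0 + rw):
                grid[y][x] = True
        centers.append((x0 + rw // 2, y0 + rh // 2))
    for (ax, ay), (bx, by) in zip(centers, centers[1:]):
        for x in range(min(ax, bx), max(ax, bx) + 1):  # horizontal leg along row ay
            grid[ay][x] = True
        for y in range(min(ay, by), max(ay, by) + 1):  # vertical leg along column bx
            grid[y][bx] = True
    return grid, centers

# Bottom-up: the topology is only known after the fact, and it varies with the seed.
heights = height_map(32, 32, seed=7)
open_cells = [[v < 0.5 for v in row] for row in heights]
print("noise map connected:", reachable(open_cells, (0, 0), (31, 31)))

# Top-down: room centres are connected whatever the seed.
dungeon, centers = carve_dungeon(40, 20, 6, seed=3)
print("dungeon connected:", reachable(dungeon, centers[0], centers[-1]))  # always True
```

The tradeoff from the quote shows up directly: the noise map can be regenerated or repaired until the check passes, while the dungeon never needs the check but its look is constrained by the construction.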

"The CTMU and Quantum Theory

The microscopic implications of conspansion are in remarkable accord with basic physical criteria. In a self-distributed (perfectly self-similar) universe, every event should mirror the event that creates the universe itself. In terms of an implosive inversion of the standard (Big Bang) model, this means that every event should to some extent mirror the primal event consisting of a condensation of Higgs energy distributing elementary particles and their quantum attributes, including mass and relative velocity, throughout the universe. To borrow from evolutionary biology, spacetime ontogeny recapitulates cosmic phylogeny; every part of the universe should repeat the formative process of the universe itself.

Thus, just as the initial collapse of the quantum wavefunction (QWF) of the causally self-contained universe is internal to the universe, the requantizative occurrence of each subsequent event is topologically internal to that event, and the cause spatially contains the effect. The implications regarding quantum nonlocality are clear. No longer must information propagate at superluminal velocity between spin-correlated particles; instead, the information required for (e.g.) spin conservation is distributed over their joint ancestral IED…the virtual 0-diameter spatiotemporal image of the event that spawned both particles as a correlated ensemble. The internal parallelism of this domain – the fact that neither distance nor duration can bind within it – short-circuits spatiotemporal transmission on a logical level. A kind of “logical superconductor”, the domain offers no resistance across the gap between correlated particles; in fact, the “gap” does not exist! Computations on the domain’s distributive logical relations are as perfectly self-distributed as the relations themselves.