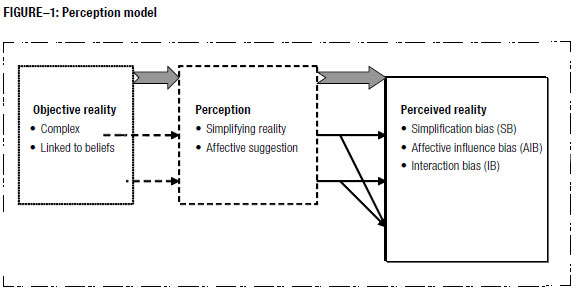

"This research was aimed at gaining a deeper insight into perception linked to environmental uncertainty and the strategic significance of perceptual diversity. Factors intervening in perception were characterised. It is specifically shown that an individual's cognitive limitations and their beliefs' affective influence gave rise to cognitive bias distorting individual perception."

http://socialsciences.scielo.org/scielo.php?pid=S0121-50512008000100004&script=sci_arttext

Augmented food, robotic chefs, food printers, and virtuoso mixers- a look at the digital gastronomy to come:

http://empiricator.com/2010/07/augmented-food-robotic-chefs-food-printers-and-virtuoso-mixers-a-look-at-the-digital-gastronomy-to-come/

"If molecular gastronomy is loosely defined as the science of deliciousness, then perhaps cooking with nostalgia or more generally, cognitive gastronomy can be thought of as the psychology of deliciousness. Within cognitive gastronomy, we could also consider other factors such as how a person’s mood and state of mind affects their enjoyment of food. In the coming weeks, I hope to explore this area further. But in the meantime, take a look at these fascinating articles by Louisa of Movable Feast, and Heston Blumenthal of The Fat Duck."

http://www.alacuisine.org/alacuisine/2006/05/the_secrets_of_.html

"The relationship between food and language around the globe. The vocabulary of food and prepared dishes, and crosslinguistic similarities and differences, historical origins, forms and meanings, and relationship to cultural and social variables. Social and cognitive issues in food advertising and in the language of menus and their historical development and crosslinguistic differences. The cognitive science of taste and food language. The structure of cuisines viewed as meta-languages with their own vocabularies and grammatical structure."

http://www.stanford.edu/class/linguist62n/

"Indeed, inquiry into cognitive science can tell us why we believe what we do (religious or otherwise), often mistakenly, and allow us to adjust for numerous flaws in human reasoning that result in so much cruelty and destruction amid, as Albert Camus put it, the "gentle indifference of the world." Many of Harris's spiritually oriented critics -- conflating science as a monolithic institution with science as a method -- rebuke him for daring to advance purely materialist insights into human values. But he is a neuroscientist; and he knows that we are only just beginning to understand the mysterious workings of the human brain. Why shouldn't he wade into these waters within his field, as priests and philosophers have done before him in theirs? If there are moral ramifications to ongoing neurological findings, is one simply supposed to ignore them?"

http://www.huffingtonpost.com/2010/11/04/sam-harris-how-science-ca_n_779020.html

"As children, for example, we learn to associate objects with sounds. An adult says the word “dog” while pointing at a poodle, and a normal child quickly learns the concept. But it turns out there’s a far more complicated cognitive process at work than many of us suppose, Jackendoff says. And the process is even more mysterious when it comes to learning abstract concepts like ought to, or shouldn’t—what is right or what is wrong.

“Much of culture might be consciously taught—you don’t do this, you don’t marry these people, this is what you should wear—but this is not the way language is usually acquired,” Jackendoff says. “Still, if you try to explain why there is such a thing as morality at all, and how children grasp it, the learning process turns out to be fantastically complicated and not entirely conscious.”

There are deeper parallels between linguistic and moral judgments. Symposium participant John Mikhail, a professor of law at Georgetown University, will build on well-known legal philosopher John Rawls’s proposal that moral judgments are much like linguistic judgments—they come to mind intuitively and you can give reasons for them, but your reasons are not the same as the judgment itself.

“Examining the logical structure behind moral judgments, asking what good it does you or the society for there to be such a thing as morality and exploring the evolutionary roots of morality can help us understand why moral conflicts arise,” says Jackendoff.

But this doesn’t necessarily tell us how to solve such conflicts in real life. Jackendoff says he and Teichman think of the symposium as an experiment in confronting the emerging results from cognitive science with real-world issues in diplomacy, legal practice, security and economics. Their goal, he says, “is to facilitate a lively interchange of ideas, in the hope of building bridges between the very different worlds of cognitive science and policy.”"

http://www.newdesignworld.com/press/story/201961

"Affective Computing is computing that relates to, arises from, or deliberately influences emotion or other affective phenomena.

Emotion is fundamental to human experience, influencing cognition, perception, and everyday tasks such as learning, communication, and even rational decision-making. However, technologists have largely ignored emotion and created an often frustrating experience for people, in part because affect has been misunderstood and hard to measure. Our research develops new technologies and theories that advance basic understanding of affect and its role in human experience. We aim to restore a proper balance between emotion and cognition in the design of technologies for addressing human needs.

Our research has contributed to: (1) Designing new ways for people to communicate affective-cognitive states, especially through creation of novel wearable sensors and new machine learning algorithms that jointly analyze multimodal channels of information; (2) Creating new techniques to assess frustration, stress, and mood indirectly, through natural interaction and conversation; (3) Showing how computers can be more emotionally intelligent, especially responding to a person's frustration in a way that reduces negative feelings; (4) Inventing personal technologies for improving self-awareness of affective state and its selective communication to others; (5) Increasing understanding of how affect influences personal health; and (6) Pioneering studies examining ethical issues in affective computing."

http://affect.media.mit.edu/

Emotional Complexity:

http://tinyurl.com/2uqdbet

The complexity of individual emotional lives : A within-subject analysis of affect structure

"Research on personality and emotion has emphasized individual differences in the amounts of various emotions experienced over time. In this study we take a different approach, one that focuses on individual differences in the structure of affective lives. In particular, we assess the structural complexity of daily affect ratings for each of our subjects, and then relate individual differences in affective complexity to aspects of personality. Affective complexity is defined as the number of within-subject factors needed to account for a given amount of variance in each subject's daily mood ratings. Our study expands on the pioneering work of Wessman and Ricks (1966) by studying both males and females, employing a broader range of personality variables, and using measures of emotional lifestyle that are independent from the data which are used to generate the affect complexity score. We found that men and women did not differ in terms of mean levels of affect complexity. However, gender differences did emerge in the correlates of affect complexity. For men, affect complexity is associated with general unhappiness, introversion, neuroticism, and higher levels of psychosomatic complaints. These correlations were insignificant for women. For both men and women affect complexity is related to emotional stability and lowered emotional reactivity. The concepts of gender stereotypes, gender role stress, and feeling rules are employed to discuss our results."

http://cat.inist.fr/?aModele=afficheN&cpsidt=3127275
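The definition in the abstract above (the number of within-subject factors needed to account for a given amount of variance in one person's daily mood ratings) can be made concrete with a small sketch. The abstract does not say which factoring method or variance cutoff the authors used, so everything specific here is an assumption: principal components of the item correlation matrix stand in for "factors", the 80% cutoff is arbitrary, and the names `correlation_matrix`, `symmetric_eigenvalues`, and `affective_complexity` are hypothetical. It also assumes every mood item varies across days.

```python
import math


def correlation_matrix(ratings):
    """Correlation matrix of mood items; `ratings` is a list of days,
    each day a list of item scores. Assumes every item varies across days."""
    n, k = len(ratings), len(ratings[0])
    means = [sum(day[j] for day in ratings) / n for j in range(k)]
    sds = [
        math.sqrt(sum((day[j] - means[j]) ** 2 for day in ratings) / (n - 1))
        for j in range(k)
    ]
    z = [[(day[j] - means[j]) / sds[j] for j in range(k)] for day in ratings]
    return [
        [sum(row[a] * row[b] for row in z) / (n - 1) for b in range(k)]
        for a in range(k)
    ]


def symmetric_eigenvalues(matrix, sweeps=50, tol=1e-12):
    """Eigenvalues of a symmetric matrix via cyclic Jacobi rotations,
    returned in descending order."""
    a = [row[:] for row in matrix]
    k = len(a)
    for _ in range(sweeps):
        off = sum(a[i][j] ** 2 for i in range(k) for j in range(k) if i != j)
        if off < tol:
            break
        for p in range(k - 1):
            for q in range(p + 1, k):
                if abs(a[p][q]) < 1e-15:
                    continue
                # Rotation angle that zeroes the (p, q) entry.
                theta = 0.5 * math.atan2(2 * a[p][q], a[q][q] - a[p][p])
                c, s = math.cos(theta), math.sin(theta)
                for i in range(k):  # columns p and q: A <- A J
                    aip, aiq = a[i][p], a[i][q]
                    a[i][p] = c * aip - s * aiq
                    a[i][q] = s * aip + c * aiq
                for j in range(k):  # rows p and q: A <- J^T A
                    apj, aqj = a[p][j], a[q][j]
                    a[p][j] = c * apj - s * aqj
                    a[q][j] = s * apj + c * aqj
    return sorted((a[i][i] for i in range(k)), reverse=True)


def affective_complexity(ratings, variance_threshold=0.8):
    """Number of principal components needed to reach the variance
    threshold for one subject (the 0.8 default is an assumed cutoff)."""
    eigenvalues = symmetric_eigenvalues(correlation_matrix(ratings))
    total = sum(eigenvalues)
    explained = 0.0
    for count, value in enumerate(eigenvalues, start=1):
        explained += value
        if explained / total >= variance_threshold - 1e-9:
            return count
    return len(eigenvalues)
```

Under these assumptions, a subject whose mood items all rise and fall together scores 1, while a subject whose items move independently needs as many components as there are items. Higher scores mean a more differentiated daily emotional life.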

Individual Differences in Emotional Complexity: Their Psychological Implications

"Two studies explored the nature and psychological implications of individual differences in emotional complexity, defined as having emotional experiences that are broad in range and well differentiated. Emotional complexity was predicted to be associated with private self-consciousness, openness to experience, empathic tendencies, cognitive complexity, ability to differentiate among named emotions, range of emotions experienced daily, and interpersonal adaptability."

http://dionysus.psych.wisc.edu/lit/Articles/KangS2004a.pdf

The Neurological Basis for Leader Complexity

"Complex Adaptive Leadership, and its core component of self-complexity, is an emerging conceptualization of leadership that is based on the premise that complex operating environments require leaders to be highly adaptive in adjusting their behavioral responses to meet diverse role demands. Further, such behavioral adaptability is contingent upon leaders having the requisite cognitive and affective complexity to facilitate effectiveness across a wide domain of roles. Leadership researchers have long attempted to understand the individual differences in cognition and affect that underlie leader performance. To this end, we demonstrate that quantitative electroencephalogram (qEEG) technology can provide valuable information about the neural correlates of various cognitive processes underlying leader self-complexity. Thus, the use of qEEG may lead to a better understanding of the latent and dynamic neurological mechanisms that are central to the cognitive affective processing of leaders and their performance. We conclude by considering how additional research could lead to the application of neurofeedback protocols for leadership development."

http://www.brainmappingforsuccess.com/resources/

Effect of positive, negative, and mixed occupational information on cognitive and affective complexity

"A series of concentrated research studies over the past 8 years has significantly demonstrated that cognitive complexity in the vocational realm is positively related to congruence or appropriateness of vocational choice. Moreover, research has shown that introducing occupational information significantly reduces, rather than increases, cognitive complexity. The results of the study reported here relate to changes in cognitive complexity as a function of the type of occupational information introduced, namely, information with respect to the advantages of occupations; the disadvantages of occupations, or a combination of positive and negative features of occupations. Our results clearly demonstrated that while positive occupational information alone leads to greater simplicity, negative or mixed information significantly retards the trend toward greater simplicity. Results are discussed from both theoretical and practical perspectives, especially with reference to the typical occupational information provided in routine vocational counseling."

http://tinyurl.com/395ushy

"Organizational communication is seen from a three-fold perspective: action, relationships, and choice. Organizations must focus on action, and communication plays a pivotal role in organizations, and may even be seen as the foundation for most organizational action (O’Reilly and Pondy 1979; Weick 1979). Hence, it must be assumed that organizational communication eventually leads to action, although not all communication can, nor should it be, associated directly with a specific action.

In other words, communication is seen as taking action and organizations are seen as collections of communicative acts (Winograd and Flores 1986). This perspective helps to identify the goals of communication insofar as they relate to different types of action while it also helps to define effective versus poor communication.

Second, organizations may be described as entities engaged in social, as well as economic, exchange (Blau 1964). Since they cannot exist without social communication, action-oriented goals are complemented by the relationship oriented goals of communication.

Third, a communicator will generally choose how to communicate. We use a combination of social and utilitarian values to describe how people choose their communication behavior, including their choice of communication media.

Within the perspective of choice, action, and relationship, we develop a model that has three main factors, each of which includes several elements (shown in Figure 1):

• Inputs to the communication process: (1) task attributes, (2) distance between sender and receiver, and (3) values and norms of communication;

• A communication cognitive-affective process that describes the choice of (1) one or more communication strategies, (2) the form of the message, and (3) the medium through which it is transmitted; and

• The communication impact: (1) the mutual understanding and (2) relationship between the sender and receiver.

Looking back at Table 1, the example demonstrates several communication goals, forms of message, and media. Communication strategies, however, are less obvious. For example, in trying to influence the employees, the CEO takes their perspective in the voice mail about the European takeover. Below we enumerate several other communication strategies and show how they affect the choice of medium and message.

We use extensively the notion of communication complexity to explain the choices of strategies, messages, and media. Communication complexity results from the use of limited resources to ensure successful communication under problematic and uncertain conditions. It grows as the demands of the communication process on mental resources approach their capacity (e.g., Rasmussen 1986). The sources of communication complexity can be categorized as cognitive complexity, dynamic complexity, and affective complexity.

Cognitive complexity is a function of

(1) the intensity of information exchanged (interdependency) between communicators, which increases the probability of misunderstanding (Straus and McGrath 1994),

(2) the multiplicity of views held by the communicators, which increases the plausibility of understanding the message in a different context than intended (Boland et al. 1994), and

(3) the incompatibility between representation and use of information, which requires the information communicated to be translated before it can be used, and increases the demands on resources and the probability of error (Barber 1988; Norman 1990).

Dynamic complexity refers to how far the communication process depends on time constraints, unclear or deficient feedback, and changes during the process. Dynamic complexity increases the likelihood of misunderstanding the required action (Diehl and Sterman 1995). For example, when the receiver’s behavior is highly unpredictable (e.g., lapses of attention), the communicator needs to adapt the communication process to fit in with the new behavior.

Affective complexity, meanwhile, refers to how far communication is sensitive to attitudes or changes in disposition toward the communication partner or the subject matter. It is typified by relational oriented obstacles such as mistrust and affective disruptions (Salazar 1995)."

http://misq.csom.umn.edu/archivist/bestpaper/teeni.pdf

On the computational complexity of action evaluations

"From the standpoint of computational complexity different ethical positions are analyzed, with the eventual idea of implementing them as actual systems. For each of the action evaluation strategies suggested by hedonism, consequentialism, and deontologism a satisfaction condition is defined. The time complexity involved in meeting these satisfaction conditions is then analyzed. Hedonism is found to be less computationally complex than consequentialism and deontologism (which are of equivalent complexity classes). In support of these analyses, a literature review discussing questions of computability, ethical computability, and computational complexity is provided. In addition, it is argued that computers need not be ethical in the same manner that humans are ethical."

http://affect.media.mit.edu/pdfs/05.reynolds-cepe.pdf

“Open innovation is the use of purposive inflows and outflows of knowledge to accelerate internal innovation, and expand the markets for external use of innovation, respectively. [This paradigm] assumes that firms can and should use external ideas as well as internal ideas, and internal and external paths to market, as they look to advance their technology.” - Henry Chesbrough

http://www.openinnovation.net/index.html

innovative Collaborative Knowledge Networks (iCKN):

The goal of this research project at the MIT Center for Collective Intelligence is to help organizations to increase knowledge worker productivity and innovation, by creating "Collaborative Innovation Networks (COINs)".

http://www.ickn.org/

Peter Gloor:

http://cci.mit.edu/pgloor/

Information Integration, Databases and Ontologies:

Although ontologies are promising for certain applications, many difficult problems remain, in part due to the essentially syntactic nature of ontology languages (e.g. OWL), the computationally intractable nature of highly expressive ontology languages (such as KIF), and the difficulty of interoperability among the many existing ontology languages, as well as among the ontologies written in those languages. Difficulties of another kind stem from the unrealistic expectations engendered by the many exaggerated claims made in the literature.

The goal of research in Data, Schema and Ontology Integration and Information Integration in Institutions is to provide a rigorous foundation for information integration that is not tied to any specific representational or logical formalism, by using category theory to achieve independence from any particular choice of representation, and using institutions to achieve independence from any particular choice of logic. The information flow and channel theories of Barwise and Seligman are generalized to any logic by using institutions; following the lead of Kent, this is combined with the formal conceptual analysis of Ganter and Wille, and the lattice of theories approach of Sowa. We also draw on the early categorical general systems theory of Goguen as a further generalization of information flow, and draw on Peirce to support triadic satisfaction.

http://cseweb.ucsd.edu/~goguen/pro

Government especially needs external ideas

NASA has been using prizes to attract external sources of innovation for several years. Not only is it helping attract innovative ideas for manned space flight, but it’s also providing a treasure trove of research information on crowdsourcing for researchers like Karim Lakhani, as this NASA press release Wednesday spelled out:

WASHINGTON -- NASA and Harvard University have established the NASA Tournament Lab (NTL), which will enable software developers to compete with each other to create the best computer code for NASA systems.

…

The lab will be housed at Harvard's Institute for Quantitative Social Science under the direction of Principal Investigator and Harvard Business School Professor Karim R. Lakhani, a leading scholar on distributed innovation and crowdsourcing. London Business School Professor Kevin Boudreau, an expert on platform-based competition, will be the chief economist of the NTL.

Under the NTL initiative, Lakhani and Boudreau also will conduct basic empirical research on the appropriate contest design parameters that yield the most effective solutions in a tournament setting. This will enable the routine use of innovation tournaments as a problem solving approach within NASA and the rest of the public sector. Harvard will collaborate with TopCoder Inc., a company that administers contests in software architecture and development, to manage and conduct the tournaments.

Lakhani and Boudreau have previously worked with challenge implementation companies to launch three experimental competitions using problems from the Harvard Medical School's Clinical and Translational Science Center and NASA's division of Space Life Sciences. Results from the experiments demonstrated the ability to deliver high performing solutions and extend the concept of innovation tournaments to scientific and engineering contexts.

At times during its 50 year existence, NASA was one of the most innovative places in the Federal government (next to DARPA and the NSA). Now that it’s shifting away from running a LEO bus service back to interplanetary exploration, it seems to be recovering its interest in innovation and new ideas.

Wouldn’t it be nice if this approach could be more widely adopted by the government? In a limited way, it is. PatentlyO blogger Dennis Crouch notes that the administration’s CTO last month unveiled a website with 35 challenges and prizes, including:

Create nutritious food that kids like — $12,000 prize.

Reducing waste at college football games — school prestige award.

Best original research paper as judged by the Defense Technical Information Center.

Provide a whitepaper on how to improve reverse osmosis membranes — up to $100,000.

Digital Forensics Challenge

Federal Virtual Worlds Challenge (Create the best virtual world for the US Army) — $25,000 in prizes.

Advance the field of wireless power transmission — $1.1M for a team that can wirelessly drive a mechanical climber to 1 kilometer height at a speed of at least 5 meters/sec.

Strong Tether Challenge — create a material with 50% more tensile strength than anything on the market for $2 million.

The problem is that the challenges — and the site’s mission itself — seem more oriented towards PR victories than solving real problems.

The Challenge.gov website proclaims itself “a place where the public and government can solve problems together,” which is just silly PR spin. A better title would have been “an admission that government doesn’t have all the answers and needs better ideas from an involved citizenry.”

I mean if an innovative company like IBM or Intel or even Apple relies on open innovation to generate new innovative ideas, certainly one of† the largest, most bureaucratic and unresponsive organizations in the world should do so. (And I say this as an employee of one of the most dysfunctional bureaucracies in the developed world — the state of California.)

We’re Number #3! We’re Number #3! As best I can tell, there are 2.7 million Federal civilian employees, vs. 3.9 million in India. I can’t find the comparable number for China, but would assume that it’s comparable to India.

Posted by Joel West at 10:54 AM

http://blog.openinnovation.net/2010/10/government-especially-needs-external.html

"Open Innovation Institutions as Governance or Selection Mechanisms?

Evidence from a Field Experiment on a NASA Space Life Science Problem

Kevin J. Boudreau (LBS) & Karim R. Lakhani (HBS)

In this paper we present novel field experimental evidence to show that individuals have different preferences for cooperative and competitive institutions for innovation---and these preferences have large, economically significant performance implications. In the experiment, individuals could choose between a competitive and a cooperative regime to solve a computational-engineering problem faced by NASA’s Space Life Sciences Directorate. The experiment mimicked “open innovation” institutional regimes where individuals can participate in either competitive or cooperative problem solving with both pecuniary and non-pecuniary reward structures. Here we compare the performance of individuals who chose to be in the competitive regime versus those that were, controlling for their skill level.

First we find that those who were given the choice of institutional regime performed significantly better and exerted more effort than those that were assigned to compete.

Second we find that the presence of pecuniary rewards resulted in better performance and more effort.

Third we find that the impact of choice on performance and effort is equivalent to, and sometimes greater than, the impact of pecuniary rewards, showing the importance of selection and sorting for innovation outcomes."

http://www.nasa.gov/centers/johnson/news/releases/2009/J09-028.html

"Karim R. Lakhani is an assistant professor in the Technology and Operations Management Unit at the Harvard Business School. He specializes in the management of technological innovation and product development in firms and communities. His research is on distributed innovation systems and the movement of innovative activity to the edges of organizations and into communities. He has extensively studied the emergence of open source software communities and their unique innovation and product development strategies. He has also investigated how critical knowledge from outside of the organization can be found and put to use inside for innovation in the biotechnology, life sciences and industrial chemicals industries. He is co-editor of Perspectives on Free and Open Source Software (MIT Press, 2005) and co-founder of the MIT-based Open Source research community and web portal."

http://drfd.hbs.edu/fit/public/facultyInfo.do?facInfo=ovr&facId=240491

Benjamin Franklin's Inventions, Discoveries, and Improvements:

http://www.ushistory.org/franklin/info/inventions.htm

Semantic Innovation Management:

Innovation within established industry can be viewed as a cyclic loop consisting of four distinct phases, i.e., recognition, initiation, implementation, and stabilization. Different information-technology-enabled innovation management tools supporting the lifecycle of innovation are classified into five layers, i.e., individual innovation, project innovation, collaborative innovation, distributed innovation, and semantic innovation. Given that the current state is evolving from distributed innovation to semantic innovation, this paper focuses on the realization of semantic innovation enabled by Semantic Web technologies. To explicitly and formally specify all the different perspectives of innovation-related information, a shared ontology is proposed as the common language of innovation management, which describes the critical and minimal information about the innovation process in a holistic way. Then, a technical framework which employs the machine-readable innovation ontology to actually improve innovation management inside an organization and among loosely coupled organizations is presented. Finally, some features of semantic innovation are discussed.

http://citeseerx.ist.psu.edu/viewdoc/summary?doi=10.1.1.104.6470

Keywords: Innovation ontology, Innovation management, Semantic Web, Goals collaboration

http://bleedingedge.jeromedl.org/preview;jsessionid=783600EB2DE311FCB5E2019D14102554?author=mailto:David.OSullivan@deri.org

Collaborative Ontology Building with Wiki@nt - A Multi-Agent Based Ontology Building Environment:

http://www.cs.iastate.edu/~honavar/Papers/baoeon04.pdf

Mechanism for Collaborative Innovation in Living Labs (Network, Idea, Games, Team):

http://www.esoce.net/YC2007/wks2/01%20-%20Thoben%20Collaborative%20Innovation%20Vision%20Dec%203%20-%202007%20%20final%20[Sola%20lettura].pdf

Kai Fischbach:

Enabling Open Innovation in a World of Ubiquitous Computing - Proposing a Research Agenda (2009):

This article proposes a new Ubiquitous Computing (UC) infrastructure for open access to object data that will come along with a new research agenda especially for the field of Wirtschaftsinformatik. Our guiding hypothesis is that by fostering an “Open Object Information Infrastructure” new ways for product, process, and business model innovations may emerge. We assume that the unrestricted access to a large amount of sensor network data is the basis for new and often unanticipated innovation. Two major trends fuel our thoughts: Firstly, the ever increasing connectivity among people and more recently things extends present communication and information technology infrastructures. Thereby we get hold of the virtual pendants of an enormous number of physical entities. In addition, advancements in the areas of sensor networks, Auto-ID techniques, including RFID, and in related fields like telecommunications, HCI, and computer science catalyze each other. Secondly, increasing deployment and insight in new business concepts, like open innovation and business ecosystems, show remarkable potential for innovating products, processes and eventually business models. This paper structures the various facets that emerge by the combination of the two trends. Furthermore it reveals how these trends may induce lasting and fundamental changes for business and society. The authors would be delighted if the issues and arguments outlined here will stimulate a discussion on a new, major research agenda for the Wirtschaftsinformatik community.

http://de.scientificcommons.org/50776335

The Bumble Bee: Ken Thompson's Shared Know-How on Team Dynamics, Virtual Collaboration and Bioteaming.

http://www.bioteams.com/

Swarm Intelligence to Swarm Robotics:

http://bit.ly/3m8aO

Swarm Intelligence: Literature Overview:

http://bit.ly/ovgL6

Swarm Intelligence: a New C2 Paradigm with an Application to Control of Swarms of UAVs:

http://www.math.ucla.edu/~shargel/UAV-swarms.pdf

Gerardo Beni:

http://bit.ly/2FUEX6

"Chapter 10: Syntax and Semantics in Languages

“[W]e cannot identify pure syntax with objective, and dismiss all the rest as subjective. A language fractioned from all its referents is perhaps something, but whatever it is, it is neither a language nor a model of a language. …. The main issue, however, is that, just as syntax fails to be the general, with semantics as a special case, so too do the purely predicative presuppositions underlying contemporary physics fail to be general enough to subsume biology as just a special case. This kind of attitude is widely regarded as vitalistic in this context. But it is no more so than the nonformalizability of Number Theory. Stated another way, contemporary physics does not yet provide enough to allow the problems of life to be well-posed.”

Chapter 11: How Universal is a Universal Unfolding?

“On the face of it, however, bifurcation theory should be as much a representation of system generation as of system failure. Indeed, in generating or manufacturing a mechanical structure, such as a bridge, we need the bridge itself to be a bifurcation point, under the very same similarity relation pertaining to failure modes; otherwise we simply could not even build it. The fact that we cannot in general interpret universal unfoldings in terms of generation modes as we can with failure modes raises a number of deep and interesting questions. Obviously, it implies that the universal unfolding is far from universal.”

http://www.panmere.com/?page_id=12

"JR: Now, all anticipatory systems are living systems, is this true?

RR: Well, all that we know about. One of the origins of these ideas I think it might be good to talk about... uh, when I was a student, there was a vaguely defined area, which impinged on my own areas of interest, it was called "bionics", which was the use of biological prototypes to design technology, OK? In fact, one of the approaches that I had to anticipatory systems was just in this bionic line, to use them because we need; I think, as part of our technology, modes of anticipation. We have to look ahead. Say, is the planet getting warmer or is it not? A lot of the problems we face are problems, which are only amenable to anticipatory control. And, in fact, that's what people are doing. What I claimed was, years ago, that what people fought about were models. They fought about what the models told them about the future and what they ought to do about it. They fought over what strategies we should use to try to improve the circumstances in the future. And that required going to more biological modes of control, anticipatory control, more complex system control, uh, than we were used to doing. This was part of what was called "bionics", was to try to use particularly the behavior of organisms to give us guides as to the kind of technologies we needed to invoke to solve the problems which technologies themselves, among other things, were creating for us. In fact, the first chapter, if I remember, of my book on anticipatory systems was sort of the background of my formal work in this area. But it was meant as, at least in part, an example of bionics: the use of biological prototypes to mold our own technologies. And as such it revolved not so much around science as engineering, and the kind of engineering that it involved had to do with what appears in biology as FUNCTION. What a specific organ or piece of an organism does is carry out a function in the larger behavior of the system as a whole.

JR: Well, isn't the notion of function considered to be non-objective? It's one of the things that reductionists don't want to deal with.

RR: Very much so. But as I said, if you want to try to control a behavior, what you're trying to control is a function, or how a function is being expressed. One of the habits of thought that was being imported from physics was that the idea of function was not a scientific notion. If you wanted to make biology scientific, you had to extrude all notions of function. And in fact, you see, in many books on the philosophy of biology, there were attempts to just cast out the notion of function. The worst sin was to use a phrase like; "The function of the heart is to pump blood." There were the most elaborate circumlocutions made to get away from using an expression of that form. It was felt to have no scientific content. Now, a pioneer in the use of the term of function in the biological sense was my teacher, Rashevsky. And, in fact, he separated biology into two aspects; one of which was the structural aspect, which he called "metric", which had to do with more or less the physics of an organism-- the sort of things which he, as a physicist himself, was used to dealing with. But then there was what he called the "functional aspect" or the "relational aspect", which he felt was equally important. In fact, MORE important. But this was one of the reasons that Rashevsky was derided, was because he insisted on this notion of biological function and the way of incorporating it into science, relating it to structure."

http://www.people.vcu.edu/~mikuleck/rsntpe.html

"Through telic feedback, a system retroactively self-configures by reflexively applying a “generalized utility function” to its internal existential potential or possible futures. In effect, the system brings itself into existence as a means of atemporal communication between its past and future whereby law and state, syntax and informational content, generate and refine each other across time to maximize total systemic self-utility. This defines a situation in which the true temporal identity of the system is a distributed point of temporal equilibrium that is both between and inclusive of past and future. In this sense, the system is timeless or atemporal.

A system that evolves by means of telic recursion – and ultimately, every system must either be, or be embedded in, such a system as a condition of existence – is not merely computational, but protocomputational. That is, its primary level of processing configures its secondary (computational and informational) level of processing by telic recursion. Telic recursion can be regarded as the self-determinative mechanism of not only cosmogony, but a natural, scientific form of teleology.

...

Where emergent properties are merely latent properties of the teleo-syntactic medium of emergence, the mysteries of emergent phenomena are reduced to just two: how are emergent properties anticipated in the syntactic structure of their medium of emergence, and why are they not expressed except under specific conditions involving (e.g.) degree of systemic complexity?"

http://www.megafoundation.org/CTMU/Articles/Langan_CTMU_092902.pdf

Constraint Recognition, Modeling, and Visualization in Network-Based Command and Control:

...

"In cybernetics, constraints are recognized to play a significant role in process control. One of the fundamental principles of control and regulation in cybernetics is the Law of Requisite Variety (Ashby, 1956). This law states that a controller of a process needs to have at least as much variety (behavioral diversity) as the controlled process. Constraints in cybernetics are described as limits on variety.

Constraints can occur on both the variety of the process to be controlled and the variety of the controller. This means that if a specific constraint limits the variety of a process, less variety is required of the controller. If a specific constraint limits the variety of the controller, less variety of the process can be met when trying to exercise control. Regarding the prediction of future behavior, Ashby notes that if a system is predictable, then there are constraints that the system adheres to. Knowing about constraints on variety makes it possible to anticipate future behavior of the controlled process, and thereby facilitates control.

The recognition of constraints on action has been observed in field studies as a strategy to cope with complexity in socio-technical joint cognitive systems. Example domains include refinery process control (De Jong & Köster, 1974), ship navigation (Hutchins, 1991a, 1991b), air traffic control (Chapman et al., 2001; K. Smith, 2001), and trading in the spot currency markets (K. Smith, 1997). Similarities of system complexity and coupling in these domains and command and control suggest that the recognition of constraints is likely a useful approach in command and control as well.

Woods (1986) emphasizes the importance of spatial representations when providing support to controllers. In this paper we develop the visualization of constraints as the support strategy. Representation design (Woods, 1995) and ecological interface design (Vicente & Rasmussen, 1992), offer design guidelines concerned with constraint: Decision support systems should facilitate the discovery of constraints, represent constraints in a way that makes the possibilities for action and resolution evident, and highlight the time-dependency of constraints. One representation scheme for such discovery is the state space.

State space representation is a graphic method for representing the change in state of process variables over time (Ashby, 1960; Flach et al., 2003; Knecht & Smith, 2001; Port & Van Gelder, 1995; Stappers & Flach, 2004). The variables are represented by the axes. States are defined by points in the space. Time is represented implicitly by the traces of states through the space. Figure 1 is an example state space in which the variables are fuel range and distance. The regions with different colors represent alternative opportunities for action. The lines between these regions are constraints on those actions. The arrows represent traces of states of a pair of processes through the space as their states change over time. The opportunities for action have yet to change for the first process, but they have changed for the second."

http://www.dodccrp.org/events/11th_ICCRTS/html/papers/152.pdf
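The state-space idea in the excerpt above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the constraint line (fuel range equal to distance), the region names, and the two example processes are hypothetical and are not taken from the paper's Figure 1.

```python
from typing import List, Tuple

# A state is a point in the space spanned by the two variables.
State = Tuple[float, float]  # (distance_km, fuel_range_km), hypothetical units


def region(state: State) -> str:
    """Classify a state relative to the assumed constraint fuel_range == distance."""
    distance, fuel_range = state
    return "can_return" if fuel_range >= distance else "cannot_return"


def trace(start: State, distance_step: float, fuel_burn: float, steps: int) -> List[State]:
    """Successive states of one process; time is implicit in the order of points."""
    distance, fuel_range = start
    states = [start]
    for _ in range(steps):
        distance += distance_step
        fuel_range -= fuel_burn
        states.append((distance, fuel_range))
    return states


# Two hypothetical processes moving through the same state space.
first = trace((10.0, 100.0), distance_step=5.0, fuel_burn=5.0, steps=4)
second = trace((40.0, 80.0), distance_step=10.0, fuel_burn=10.0, steps=4)

first_regions = [region(s) for s in first]
second_regions = [region(s) for s in second]
print(first_regions)
print(second_regions)
```

In this toy run the first trace stays inside one region, while the second crosses the constraint line, so its opportunities for action change along the way.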

"Where reality is characterized by dual-aspect infocognitive monism (read on), it consists of units of infocognition reflecting a distributed coupling of transductive syntax and informational content. Conspansion describes the “alternation” of these units between the dual (generalized-cognitive and informational) aspects of reality, and thus between syntax and state. This alternation, which permits localized mutual refinements of cognitive syntax and informational state, is essential to an evolutionary process called telic recursion. Telic recursion requires a further principle based on conspansive duality, the Extended Superposition Principle, according to which operators can be simultaneously acquired by multiple telons, or spatiotemporally-extensive syntax-state relationships implicating generic operators in potential events and opportunistically guiding their decoherence.

Note that conspansion explains the “arrow of time” in the sense that it is not symmetric under reversal. On the other hand, the conspansive nesting of atemporal events puts all of time in “simultaneous self-contact” without compromising ordinality. Conspansive duality can be viewed as the consequence of a type of gauge (measure) symmetry by which only the relative dimensions of the universe and its contents are important."

...

"So for both the 2D disk and the 3D tetrahedron, the boundary of the boundary is 0. While physicists often use this rule to explain the conservation of energy-momentum (or as Wheeler calls it, “momenergy”), it can be more generally interpreted with respect to information and constraint, or state and syntax. That is, the boundary is analogous to a constraint which separates an interior attribute satisfying the constraint from a complementary exterior attribute, thus creating an informational distinction." -Pg. 10, PCID 2002, Langan

http://www.megafoundation.org/CTMU/Articles/Langan_CTMU_092902.pdf

"Symmetry of subject and predicate

This definition can be reconciled with the short form definition above by taking A to be a subset of K^X, namely by representing the predicate a as the function λx.r(x,a): X→K mapping each individual to the value of the predicate on that individual, and taking A to be the set of all such functions from X to K. In this case there is no separate matrix, just the two sets consisting of respectively individuals and predicates. However this breaks the symmetry of subject and predicate. The more symmetric matrix encoding of this information makes it equally reasonable to view X as a subset of K^A when this is helpful, bearing in mind however that the matrix may contain repeated rows and/or columns, i.e. in neither case need these be extensional subsets of K^X or K^A.

A more satisfactory reconciliation with the short form definition is to view the subject-predicate relationship as a symmetrically expressed relation r. We do not have to interpret the notion of predicate on a set X of subjects as a function a:X→K, any more than we have to interpret a subject as a function x:A→K. Instead we have three options: either of those two, or just leaving r as the symmetric expression of the relationship. Unlike both algebras and topological spaces, Chu spaces do not take subjects to be primitive and predicates to be derived, but rather take both to be primitive.

In this symmetric view, "subject" is more natural than "individual." One envisages an individual as having an independent existence. A subject on the other hand is a subject of something: it forms one half of an elementary proposition r(x,a) that combines a subject x with a predicate a.

This reconciliation has a historical link with the discovery and resolution of the paradoxes of Cartesian Dualism. If we identify the sets X and A with Descartes' 1647 division of the universe into physical and mental components respectively, then r is the mediator of these components sought by many philosophers during the following century. The respective proposals of Hume and Berkeley to make one side or the other primitive correspond to taking respectively X or A to be primitive and deriving the other in terms of functions from the former to K. That Hume won out would seem to be correlated with mathematics' preference for basing mathematical objects on their constituent individuals rather than their constituent predicates."

http://chu.stanford.edu/
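A minimal Python sketch may make the symmetric matrix view concrete. The class name `ChuSpace`, its method names, and the two-by-two example over K = {0, 1} are assumptions made for illustration; they do not come from the Chu spaces site.

```python
from typing import Dict, List, Tuple


class ChuSpace:
    """A Chu space over K: subjects X, predicates A, and a matrix r: X x A -> K."""

    def __init__(self, subjects: List[str], predicates: List[str],
                 r: Dict[Tuple[str, str], int]) -> None:
        self.subjects = subjects
        self.predicates = predicates
        self.r = r

    def predicate_as_function(self, a: str) -> Dict[str, int]:
        """View predicate a as a function X -> K (one column of the matrix)."""
        return {x: self.r[(x, a)] for x in self.subjects}

    def subject_as_function(self, x: str) -> Dict[str, int]:
        """View subject x as a function A -> K (one row of the matrix)."""
        return {a: self.r[(x, a)] for a in self.predicates}

    def dual(self) -> "ChuSpace":
        """Swap the roles of subjects and predicates by transposing r."""
        transposed = {(a, x): k for (x, a), k in self.r.items()}
        return ChuSpace(self.predicates, self.subjects, transposed)


# Hypothetical example with K = {0, 1}.
space = ChuSpace(
    subjects=["x1", "x2"],
    predicates=["a", "b"],
    r={("x1", "a"): 1, ("x1", "b"): 0, ("x2", "a"): 1, ("x2", "b"): 1},
)
column_b = space.predicate_as_function("b")
row_b_in_dual = space.dual().subject_as_function("b")
print(column_b, row_b_in_dual)
```

Neither side is privileged here: a column of the original space is a row of its dual, which is the symmetry of subject and predicate that the excerpt describes.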

Formal Theories of Information:

http://books.google.com/books?id=L82LP_AJcjwC&dq=Formal+theories+of+information&output=html_text&source=gbs_navlinks_s

State Spaces, Local Logics, and Non-Monotonicity:

A bumper sticker recently in vogue advocated that one should "Think Globally, act locally". While the sentiment is admirable, if taken literally the injunction is impossible to follow. Thinking and reasoning are inherently local, located in space and time, always taking place against prevailing background conditions. We are reliant on these background conditions being certain ways, ways that seem to be almost hard-wired.

http://citeseerx.ist.psu.edu/viewdoc/download?doi=10.1.1.57.3367&rep=rep1&type=pdf

Algebraic Models of Reasoning:

...

"A few examples for a successful application of algebraic methods are [4], [8], [18], [30] in artificial intelligence and [23] and [19] in cognitive science where those methods are successfully applied to reasoning and cognition.

Most of the current symbolic AI approaches use more or less logical formalisms for reasoning. These formalisms have a long history based on the fact that logical techniques like resolution were quite successful for deductive inferences like in theorem proving applications. But it turns out that non-deductive inference mechanisms like analogical reasoning and inductive reasoning as well as non-classical inference schemes like non-monotonic reasoning and qualitative reasoning display perennial problems for logical accounts. We think that algebraic approaches can provide a resort for these problems.

There is no simple relation between logic and algebra: the models for many types of logic are usually algebraic in nature (for example, Boolean algebras as models for classical logic, Heyting algebras as models for intuitionism, or deMorgan algebras as models for relevance logic) but on the other hand algebras alone are hard to use as a tool for inference mechanisms. Logics have a rich history of applications in AI ranging from planning (situation calculus) to theorem proving (resolution) and from knowledge representation (temporal reasoning, belief revision) to the dynamic update of multi-agent systems whereas algebraic accounts are rarely used for deductions. But for the broad (and difficult) area of non-classical and non-deductive reasoning algebras are tools in their own right.

The paper has the following structure: In modern approaches in theoretical computer science and the foundations of artificial intelligence, category theory has been playing an important role. Starting from category theory we will provide roughly the idea of Chu spaces and closure resp. topological spaces. We will continue by presenting formal concept analysis and channel theory that both have strong connections to category theory and Chu spaces. Going further to the classical algebraic theories we will discuss single- and many-sorted algebras and their applications in cognitive modeling. We will finish this overview with some remarks concerning coalgebras and conclusions. We think that the presented compact and comprehensive presentation of fundamental algebraic concepts will be helpful for a wide audience of researchers who are not so familiar with these algebraic techniques, in order to get an idea of their usefulness."

http://citeseerx.ist.psu.edu/viewdoc/download?doi=10.1.1.91.7389&rep=rep1&type=pdf

Center for the Study of Language and Information (researchers):

http://www-csli.stanford.edu/people/researchers.shtml

Artificial Intelligence Center @ SRI:

http://www.ai.sri.com/

John Perry:

http://www-csli.stanford.edu/~jperry//

Computational Semantics Laboratory:

http://godel.stanford.edu/twiki/bin/view/Public/WebHome

"Game theory pragmatics: This is a term I coined, by analogy with pragmatics in linguistics. Our work includes experimental work such as our work on computational pool games ('pool' as in billiards or 8-ball), and formal models of computational limitations in games.

The Intelligent Avatar: Turning passive virtual world puppets into active, intelligent entities.

Formal and useful models of motivational mental attitudes: Informational relations between agents and propositions - notions such as knowledge, certainty and belief are well studied in AI, philosophy, game theory, and many others.

In comparison, motivational relations - notions such as goal, desire, intention, want, and others - are much less well studied. We are after formal models - whether logical, Bayesian, or other - that are rigorous, intuitive, and useful."

http://robotics.stanford.edu/~shoham/

"My research interests revolve around computational learning and discovery, especially their role in computational biology, scientific data analysis, adaptive user interfaces, and cognitive architectures for intelligent agents."

http://cll.stanford.edu/~langley/

"Professor Winograd's focus is on human-computer interaction design and the design of technologies for development. He directs the teaching programs and HCI research in the Stanford Human-Computer Interaction Group. He is also a founding faculty member of the Hasso Plattner Institute of Design at Stanford (the "d.school") and on the faculty of the Center on Democracy, Development, and the Rule of Law (CDDRL).

Winograd was a founding member and past president of Computer Professionals for Social Responsibility. He is on a number of journal editorial boards, including Human Computer Interaction, ACM Transactions on Computer Human Interaction, and Informatica."

http://hci.stanford.edu/~winograd/

Edward N. Zalta is a Senior Research Scholar at the Center for the Study of Language and Information (CSLI). His research specialties include:

Metaphysics and Epistemology

Philosophical Logic/Philosophy of Logic

Philosophy of Language/Intensional Logic

Philosophy of Mathematics

Philosophy of Mind/Intentionality

http://mally.stanford.edu/zalta.html

"Agent decision-making architectures and their evaluation, including: cognitive models; knowledge representation; logics for agency; ontological reasoning; planning (single and multi-agent); reasoning (single and multi-agent)

Cooperation and teamwork, including: distributed problem solving; human-robot/agent interaction; multi-user/multi-virtual-agent interaction; coalition formation; coordination

Agent communication languages, including: their semantics, pragmatics, and implementation; agent communication protocols and conversations; agent commitments; speech act theory

Ontologies for agent systems, agents and the semantic web, agents and semantic web services, Grid-based systems, and service-oriented computing

Agent societies and societal issues, including: artificial social systems; environments, organizations and institutions; ethical and legal issues; privacy, safety and security; trust, reliability and reputation

Agent-based system development, including: agent development techniques, tools and environments; agent programming languages; agent specification or validation languages

Agent-based simulation, including: emergent behavior; participatory simulation; simulation techniques, tools and environments; social simulation

Agreement technologies, including: argumentation; collective decision making; judgment aggregation and belief merging; negotiation; norms

Economic paradigms, including: auction and mechanism design; bargaining and negotiation; economically-motivated agents; game theory (cooperative and non-cooperative); social choice and voting

Learning agents, including: computational architectures for learning agents; evolution, adaptation; multi-agent learning.

Robotic agents, including: integrated perception, cognition, and action; cognitive robotics; robot planning (including action and motion planning); multi-robot systems.

Virtual agents, including: agents in games and virtual environments; companion and coaching agents; modeling personality, emotions; multimodal interaction; verbal and non-verbal expressiveness

Significant, novel applications of agent technology

Comprehensive reviews and authoritative tutorials of research and practice in agent systems

Comprehensive and authoritative reviews of books dealing with agents and multi-agent systems.

Related subjects » Artificial Intelligence - Communication Networks - HCI - Software Engineering

IMPACT FACTOR: 1.51 (2009) *

Rank 12 of 53 in subject category Automation & control systems"

http://www.springer.com/computer/ai/journal/10458

Reductive - Holistic Cycle: A Model for the Study of Didactic Procedure

"Thus, the problem of reductionism becomes a matter of connecting those conceptual categories. Here the solution is given by category theory, which links categories via functors. Therefore, category theory represents a valuable tool in the case of epistemological problems too, as i.e. with reality levels and reductionism and the relations between scientific and especially empirical theories."

http://tinyurl.com/258weae

"Consider an intelligent system S being able to understand any description of the structure of another one T by means of a language L. With this assumption, after knowing the structure of T, the system S can perform any action which T can do. To see this fact, consider that any human who can understand the meaning of each statement in any programming language can carry out every algorithm that a computer can do.

In addition, if S can get at the structure of T, by observing a sample D from the behavior of T, then the capability of S must be greater or equal to the one of T; because knowing the structure of T implies to know also its working way. Thus, under such a circumstance we can write T <= S; where obviously <= stands for an ordering induced by the intelligence-capability of the involved systems.

Now, suppose that a system M possesses a maximal intelligence-capability. In this case, for every intelligent system S the relation M <= S implies M = S. Recall that the relation M <= S means that S can get at the structure of M by observing samples of its behavior; consequently M = S implies that M can also know and describe its proper structure. Summarizing, a "necessary condition for intelligence maximality of a system M consists of being able to get at its proper structure".

Of course, this is a necessary maximality condition, but not a sufficient one. Intelligence capability is an open quality increasing through experience. However, who lacks of such a necessary maximality condition is constrained by an obvious limitation, and this is a good reason to investigate the underlying algebraic structure in our proper intelligence. This is the main aim of this research group I am opening now. Indeed it is not computer science but it is related to. In fact it is the support of every science, since to understand any human creation the best results can be obtained knowing the structure of the working device, that is, the human intelligence. This is why the title of this group includes the adjective “natural” instead of the artificial one."

http://www.researchgate.net/group/Algebraic_structure_of_natural_intelligence/

"In mathematics, computer science and economics, optimization, or mathematical programming, refers to choosing the best element from some set of available alternatives.

In the simplest case, this means solving problems in which one seeks to minimize or maximize a real function by systematically choosing the values of real or integer variables from within an allowed set. This formulation, using a scalar, real-valued objective function, is probably the simplest example; the generalization of optimization theory and techniques to other formulations comprises a large area of applied mathematics. More generally, it means finding "best available" values of some objective function given a defined domain, including a variety of different types of objective functions and different types of domains."

http://wapedia.mobi/en/Optimization_(mathematics)
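The "simplest case" in the excerpt above, minimizing a real function over an allowed set, can be sketched as follows. The objective `(x - 3)^2` and the integer range are hypothetical choices made only for illustration.

```python
def objective(x: int) -> int:
    """Scalar, real-valued objective function (hypothetical example)."""
    return (x - 3) ** 2


# The allowed set: integers from -10 to 10 inclusive (an assumption).
allowed = range(-10, 11)

# Systematically evaluate every allowed value and keep the minimizer.
best_x = min(allowed, key=objective)
best_value = objective(best_x)
print(best_x, best_value)
```

Exhaustive search only works because the allowed set is tiny; larger or continuous domains call for the specialized techniques the excerpt alludes to.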

"The book is dedicated to multi-objective methods in decision making. One half of the book is devoted to theoretical aspects, covering a broad range of multi-objective methods such as multiple linear programming, fuzzy goal programming, data envelopment analysis, game theory, and dynamic programming. Readers interested in practical applications will find in the remaining parts, a variety of approaches applied in numerous fields; including production planning, logistics, marketing, and finance."

http://books.google.com/books?id=oXYVBZQDye4C&output=html_text&source=gbs_navlinks_s

"The objective of global optimization is to find the globally best solution of (possibly nonlinear) models, in the (possible or known) presence of multiple local optima. Formally, global optimization seeks global solution(s) of a constrained optimization model. Nonlinear models are ubiquitous in many applications, e.g., in advanced engineering design, biotechnology, data analysis, environmental management, financial planning, process control, risk management, scientific modeling, and others. Their solution often requires a global search approach.

A few application examples include acoustics equipment design, cancer therapy planning, chemical process modeling, data analysis, classification and visualization, economic and financial forecasting, environmental risk assessment and management, industrial product design, laser equipment design, model fitting to data (calibration), optimization in numerical mathematics, optimal operation of "closed" (confidential) engineering or other systems, packing and other object arrangement problems, portfolio management, potential energy models in computational physics and chemistry, process control, robot design and manipulations, systems of nonlinear equations and inequalities, and waste water treatment systems management."

http://mathworld.wolfram.com/GlobalOptimization.html
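To see why multiple local optima call for a global search, here is a small sketch that compares a single gradient descent run with a multi-start variant. The test function, the step size, and the starting grid are all assumptions chosen for illustration, and multi-start is only one simple heuristic; it does not guarantee a global optimum in general.

```python
from typing import List


def f(x: float) -> float:
    """Hypothetical objective with two local minima (near x = -1.30 and x = 1.13)."""
    return x**4 - 3 * x**2 + x


def grad_f(x: float) -> float:
    """Derivative of f."""
    return 4 * x**3 - 6 * x + 1


def local_descent(x0: float, lr: float = 0.01, steps: int = 2000) -> float:
    """Plain gradient descent; it converges to whichever local minimum is downhill."""
    x = x0
    for _ in range(steps):
        x -= lr * grad_f(x)
    return x


# A single local search started at x = 2 gets stuck in the shallower minimum.
local_only = local_descent(2.0)

# Multi-start: run the same local search from several points, keep the best result.
starts: List[float] = [-2.0, -1.0, 0.0, 1.0, 2.0]
candidates = [local_descent(s) for s in starts]
best = min(candidates, key=f)
print(local_only, best)
```

Here the single run settles near x = 1.13, while the multi-start run finds the deeper minimum near x = -1.30.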

"Constraint satisfaction/optimization is a powerful paradigm for solving numerous practical problems like planning, scheduling and resource allocation. There exists a large body of work tackling such problems in a centralized setting, with centralized algorithms. However, many real problems are naturally distributed between a set of agents, each one holding its own subproblem, which has interdependencies with (some of) its peers' subproblems. The Distributed Constraint Optimization Problem (DCOP) is a framework that has thus recently emerged as a promising approach to addressing complex coordination problems in Multiagent Systems.

In DCOP, the agents communicate through message exchange to find the optimal solution to the overall optimization problem. Key issues are efficiency (minimizing communication and memory requirements), coping with system dynamics (problems can change at runtime), privacy (leaking as little private constraints and valuations as possible) and incentives (designing algorithms that ensure honest behavior from self-interested agents)."

http://liawww.epfl.ch/People/apetcu/DCRtutorial/
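The problem structure behind DCOP can be sketched with a toy instance. Note the loud assumption: the code below solves the instance by centralized brute force purely to show what the agents are jointly optimizing; real DCOP algorithms reach the same answer by exchanging messages between agents. The agent names, domains, and cost tables are hypothetical.

```python
from itertools import product
from typing import Dict, List, Tuple

# Each agent owns one variable with a binary domain (hypothetical instance).
agents: List[str] = ["a1", "a2", "a3"]
domain: List[int] = [0, 1]

# Interdependencies between neighbouring agents: a cost of 1 when their values are equal.
binary_constraints: List[Tuple[str, str]] = [("a1", "a2"), ("a2", "a3")]

# A private preference held by a1 alone: choosing 1 costs it 1.
unary_costs: Dict[str, Dict[int, int]] = {"a1": {0: 0, 1: 1}}


def total_cost(assignment: Dict[str, int]) -> int:
    """Sum of all pairwise and unary costs for a complete assignment."""
    cost = sum(1 for i, j in binary_constraints if assignment[i] == assignment[j])
    cost += sum(costs[assignment[agent]] for agent, costs in unary_costs.items())
    return cost


# Centralized enumeration of every joint assignment (for illustration only).
candidates = [dict(zip(agents, values)) for values in product(domain, repeat=len(agents))]
best = min(candidates, key=total_cost)
print(best, total_cost(best))
```

Enumeration grows exponentially with the number of agents, which is one reason the efficiency and privacy concerns listed in the excerpt matter for distributed algorithms.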

ITERATIVE CONSTRAINED OPTIMIZATION FOR FLEXIBLE CLASSIFIER DESIGN WITH MULTIPLE COMPETING OBJECTIVES

http://www.icsi.berkeley.edu/~sibel/MLSP2007ICO.pdf

CDGO 2007: 2nd International Conference on Complementarity, Duality and Global Optimization in Science and Engineering

http://www.ise.ufl.edu/cao/CDGO2007/ProgramCDGO2007.pdf

Evolutionary Techniques for Constrained Optimization Problems:

http://citeseerx.ist.psu.edu/viewdoc/download?doi=10.1.1.40.931&rep=rep1&type=pdf

– Fundamental understanding of the interplay and common underlying science among social/cognitive, information, and communications networks

– Determination of how processes and parameters in one network affect and are affected by those in other networks

– Prediction, design, and control of the individual and composite behavior of these complex interacting networks

http://www.ns-cta.org/ns-cta-blog/wp-content/uploads/2010/08/January.pdf

The definition of Intelligence I am interested in is the amount of global topological structure functionally represented by a local algebra.

http://www.google.com/Top/Computers/Artificial_Intelligence/People/

Category Theory and Consciousness:

We propose a new research program which provides an approach to the problem of consciousness and deep reality. Our model is based on category theory. The need to relate local behavior to global behavior has convinced us early on that a good model for conscious entities had to be found in the notion of a sheaf. With this formulation, every presheaf represents a brain (or conscious activity). An important aspect of the theory we will develop is the notion of sheafification of a presheaf which will allow us to include complementarity as part of the description of the universe. Our model is intended to describe a notion of consciousness which is pervasive throughout the universe and not localized in individual conscious entities.

...

In other words, we needed to provide a model which shows that "objects" which are locally trivial do not necessarily remain trivial at the global level. In our paper we do exactly this through the introduction of the new concept of consheaf (consciousness sheaf), a concept intermediate between presheaf and sheaf.

http://tinyurl.com/2443422

CATEGORIES, PRESHEAVES, SHEAVES AND COHOMOLOGIES FOR THE THEORY OF CONSCIOUSNESS

An analysis with commentaries on a theory of consciousness, developed recently by Goro Kato, is presented. The main pillars of Kato’s theory are a reference category and a target category with presheaves that are functors with special properties, between them. The reference category is the generalized time category; the target category contains thoughts and even physical structures. The presheaves are targeting local thoughts, and the corresponding sheaves form global thoughts from local thoughts. The cohomology defined on a sequence of objects in the target category, every object representing a conscious entity (or a person), shows the position and the links of a person (consciousness) in a network of persons (consciousness).

This paper, not being addressed to professional mathematicians, but to those working in consciousness science, psychology, science of information, physics, etc., offers the necessary notions of presheaves, sheaves and cohomology as a general introduction to them, in order to follow the ideas and concepts of the theory of consciousness based thereupon.

At the same time some considerations on the theoretical construction of Kato are presented. For instance, the reference category of the generalized time might indeed be used at the level of a universe, looking from the universe inside it or toward the deep existence of reality. If the reference category were in the deep existence, that has no time, looking from the deepest strata of reality toward the universes and consciousness, y compris the fundamental consciousness of existence, the generalized time category would have to be replaced by another one (perhaps with a form of cronos, without duration).

It is shown that perhaps, at least in some cases, it would be possible to work only in the frame of categories with functors among them (of the type of Kato’s target category) and using also the cohomology theory. It will remain to be seen if the presheaves and sheaves would be useful in this case. Kato’s frame and the above mentioned frame are two possibilities, but in both frames the cohomology might have the same role.

Kato’s theory is thought provoking and for both frames mentioned above, an important aspect will be a connection with the phenomenological and structural-phenomenological categories and functors described by, until now, another line of thought that uses categories and functors for consciousness and also for physical processes. It seems that such a connection is indeed possible and will be tried in further works.

http://noesis.racai.ro/Noesis2002/2002Art05.pdf

PRODUCTS OF PHENOMENOLOGICAL CATEGORIES AND PRODUCTS OF PHENOMENOLOGICAL FUNCTORS:

http://noesis.racai.ro/Noesis2003/2003Art02.pdf

Community and Social Factors for the Integrative Science:

http://www.racai.ro/~dragam/COMMUNITY.pdf

An Overview of Academician Mihai Drăgănescu's Conceptual Contributions to Information Science

http://sic.ici.ro/sic2009_4/art12.php

Category Theory as the Language of Consciousness:

http://www.mindspring.com/~r.amoroso/Amoroso24.pdf

Cosmos and Quantum: Frontiers for the Future

"Modern quantum theory has opened the door to a profoundly new vision of the cosmos, where the observer, the observed and the act of observation are fundamental and interlocked. No more is the universe to be studied as a mechanical conglomerate of parts. The interconnectedness of everything is particularly evident in the non-local interactions of the quantum universe. As such, the very large and the very small are also interconnected. In the present work, we look at several levels in the universe, where quantum theory may play an important role. We also look at the implications for a new, radically different view of the cosmos."

http://journalofcosmology.com/QuantumConsciousness108.html

Planck Scale Physics, Pregeometry and the Notion of Time

"Recent progress in quantum gravity and string theory has raised interest among scientists to whether or not nature behaves discretely at the Planck scale. There are two attitudes twoards this discretenes i.e. top-down and bottom-up approach. We have followed up the bottom-up approach. Here we have tried to describe how macroscopic space-time or its underlying mesoscopic substratum emerges from a more fundamental concept. The very concept of space-time, causality may not be valid beyond Planck scale. We have introduced the concept of generalised time within the framework of Sheaf Cohomology where the physical time emrges around and above Planck scale. The possible physical amd metaphysical implications are discussed."

http://arxiv.org/abs/gr-qc/0311012

"One of the main principles is the belief that the only way to an improved Self is through study (see Mussar movement and Jewish ethics). The major works for this neo-gnostic philosophy are derived from the fundamental syllabus of Judaism. As such, the major source is the Torah and especially in its synthesis, the Talmud. The canon of Jewish theosophy is open, that is to say, the source material can constantly be added to or updated by the group or individual. Material can be derived from other Jewish sources, such as the writings of Jewish Kalam and the Zohar of Jewish mysticism (i.e. kabbalah), or even non-Jewish sources, such as the Sufism of Islam or the Yoga of Hinduism, and classic Hellenistic philosophy of the Platonists and Stoics. Some of these concepts are encapsulated in the works of E. P. Sanders and in the "New Perspective on Paul", through the early works of Philo and his Hellenistic Judaism.

Another tenet of Jewish theosophy is that although God knows all thoughts, decisions and actions of the individual, present and future, the individual does have free will to think and do. This fundamental allows for self-improvement, for the want and good of God and not necessarily for the good of the individual."

http://en.wikipedia.org/wiki/Jewish_theosophy

The Cabala.--By Cabala we understand that system of religious philosophy, or more properly, of Jewish theosophy, which played so important a part in the theological and exegetical literature of both Jews and Christians ever since the Middle Ages.

The Hebrew word Cabala (from Kibbel) properly denotes "reception," then "a doctrine received by oral tradition."

http://www.sacred-texts.com/jud/cab/cab03.htm

"After the year A.D. 415, these theories did not continue to develop in Alexandria and the principal subject for research and study was theology, while the paganism passed away with the art of science. In the year A.D. 529, all the schools in Athens were closed according to an order of the Byzantine emperor Justinian I, thus ending one of the most brilliant periods in the development of mathematics and science.

The philosophy and many theories on the Pythagorean way of life, transmitted orally by Pythagoras, were considerably influenced by the way of life of Judaism and the Bible, which was the only source explicitly prescribing the order. King Solomon lived about 400 years before Pythagoras. After the destruction of the First Temple of Jerusalem (586 B.C., before Pythagoras was born), under foreign and hostile rule, Jews gathered in regional schools. The five-pointed star (not six!) referred to in Judaism as "Solomon's Seal" and by the Greeks as "pentagrama", was, in addition to the triangle, one of the symbols of the Pythagoreans. As known, the most ancient source of the pentagrama found by archeologists is Jewish. There is even a presumption that it was the symbol of the Jews before the six-pointed star "Shield of David".

After the destruction of the Second Temple of Jerusalem (332 B.C.), many Jews chased from their land escaped to Egypt and became involved in the instructional material and methods of the Pythagoreans, and especially of the "Alexandrian Pythagoreans". Jewish leaders and intellectuals as Artapanos, Philon the Alexandrian, Josephus Flavius, the Hasmoneans, Johanan Hurcanus, Alexander Jannean, Hanoch, Hillel, Johanan ben-Zakkai - the founder of the famous school at Yavneh, and others were central figures of this "spiritual-scientific" development. The esoteric Jewish theosophy (religious mystic philosophy) started to develop at about this time. In the absence of a central spiritual leadership under foreign and hostile rule, some regional Jewish schools developed into kinds of sects as the Essenes, Nazarenes, Pharisees, and Sadducees.

The Jewish theosophy (Cabala) reached its peak about the 12th and 13th centuries causing the awakening in the development of science and mysticism. Indeed, in the 11th and 12th centuries, the greatest contribution to science was made by the Jewish scholars, especially in Spain. Names known from religious sources as "Rabbis" and "Cabalists", are known in history books of mathematics and science as outstanding inventors and developers.

Rabbi Abraham bar-Chiya (known in science books as Savasorda) of Barcelona, who wrote and published in Hebrew the encyclopedia entitled "Source of Intelligence and Tower of Belief" and the book entitled "Liber Embadorum" treating about geometry and arithmetic theories. His books were translated into Latin and German and used as a source for other mathematicians.

Rabbi Abraham ben-Ezra of Toledo published his famous mathematic books in Hebrew "Book of Unity", "Book of Number", and "Stratagem". In his books he refers to two Jewish researchers who published books in the 10th century: A judge named Hazan from an unknown source and a physicist named Yehuda ben-Rakufial.

Maimonides of Cordoba, the renowned philosopher, physicist, astronomer, and physician, is the greatest Jewish religious author (Rabenu Moshe bar-Maimon -- RaMBaM) since Moses. Other contributors are Johannes Hispalensis of Seville; Samuel ben-Abbas; an unknown English Jew who wrote a book entitled "Mathematum Rudimenta Quaedam"; Moses ben-Tibbon and Jacob ben-Machir, members of the celebrated Tibbon family of Toledo, while the latter published continuation work to Euclid and Menelaus; Jehuda ben-Salomon Kohen of Toledo that developed Euclid's work; and Isaac ben-Sid of Toledo.

The leading Jewish mathematician of the 14th century was Rabbi Levi ben-Gerson (RaLBaG) of Catalonia, but more commonly known in science books as Master Leo de Balneolis. His book "Work of the Computer" in Hebrew was translated into Latin under the title "De Numeris Harmonicis". Isaac ben-Joseph Israeli, a mathematician and astronomer, wrote in Hebrew the book "Fundamentum Mundi" that was translated into Latin and German; Joseph ben-Wakkar of Seville, Jacob Poel of Perpignan, Imanuel Bonfils, Jacob Carsono of Seville, Isaac Zaddik; Calonymos ben-Calonymos of Arles, known as Master Calo, whose works include a paraphrase of Nicomachus; Jacob Caphanton of Castile, a physician with his book on arithmetics; Jehuda Verga, Levinus Hulsius, and Cornelius de Judeis of Nuernberg.

At this stage, this precious scientific work was stopped by the Inquisition, who saw dealing with science as mystic and as witchcraft, and expelled the Jews from Spain in 1492.

Among the Jews who emigrated to the Ottoman Empire, the Sultan Mehmet II, the conqueror of Constantinople, appointed Rabbi Moses Kapsali as Chief Rabbi (Haham-Bashi) over all the Empire. His successor in office Elia Misrachi wrote books on mathematics which were translated into German in Basle. He seems to have been the first to treat of finding the sum of the cubes of the first n natural numbers and to claim that the fundamental arithmetic operations are three: Addition, subtraction, and division, while he includes multiplication into addition. Their study was based on a work by Rabbi Abraham ben-Ezra, Euclid, and Ptolemy.

As a conclusion, the Pythagorean brotherhood was one of the world's earliest unpriestly cooperative scientific societies, if not the first, and that its members invented the "Multiplication Table" and raised important scientific problems which were solved only 1500 years later. On the tombs of some of them, one could find carved geometric tools as quadrant, square, cubit, and level."

http://web.mit.edu/dryfoo/Masonry/Essays/pythagoras.html

The One Mind Model of Quantum Reality: Whitehead, God, and Theories of Mind, Evolution, and Cosmology

"The genesis of actuality from potentiality, with its apparent role of the observer, is an important and unsolved problem which essentially defines science’s view of reality in a variety of contexts. The problem of the observer, viewed in the context of the Whiteheadean doctrine of panexperientialism, is solved when experience is viewed as a fundamental property of nature. Observation then becomes lawful and not emergent.

Panentheism is needed to provide a mechanism for order outside of blind efficient causality, in a Universal final causality. The One Mind Model of quantum reality follows from the notion of a Universal observer, with the One Mind identified as the Mind of God as well as the Self, which is the source of individual human mentality. The One Mind model gives us a single Universe, originating and evolving through a unitary process that solves the problem of the genesis of the actual from the possible. Our scientific models of the mind, consciousness, cosmology, and evolution lack explanatory power, and demand the Whiteheadean concepts of mentality, final causality, panexperientialism, and panentheism. The quantum vacuum, which is the ultimate subtext of physical reality, is described as a means of bringing these concepts into mainstream science."

http://www.goertzel.org/dynapsyc/OneMindPaper.pdf

"You cannot shelter theology from science, or science from theology; nor can you shelter either from metaphysics, or metaphysics from either of them. There is no short cut to truth." Alfred North Whitehead (RM 79)

"God made religion and science to be the measure, as it were, of our understanding. Take heed that you neglect not such a wonderful power. Weigh all things in this balance.

To him who has the power of comprehension religion is like an open book, but how can it be possible for a man devoid of reason and intellectuality to understand the Divine Realities of God?

Put all your beliefs into harmony with science; there can be no opposition, for truth is one. When religion, shorn of its superstitions, traditions, and unintelligent dogmas, shows its conformity with science, then will there be a great unifying, cleansing force in the world which will sweep before it all wars, disagreements, discords and struggles - and then will mankind be united in the power of the Love of God."

...

"The virtues of humanity are many but science is the most noble of them all. The distinction which man enjoys above and beyond the station of the animal is due to this paramount virtue. It is a bestowal of God; it is not material, it is divine. Science is an effulgence of the Sun of Reality, the power of investigating and discovering the verities of the universe, the means by which man finds a pathway to God. All the powers and attributes of man are human and hereditary in origin, outcomes of nature's processes, except the intellect, which is supernatural. Through intellectual and intelligent inquiry science is the discoverer of all things. It unites present and past, reveals the history of bygone nations and events, and confers upon man today the essence of all human knowledge and attainment throughout the ages. By intellectual processes and logical deductions of reason, this super-power in man can penetrate the mysteries of the future and anticipate its happenings.

Science is the first emanation from God toward man. All created beings embody the potentiality of material perfection, but the power of intellectual investigation and scientific acquisition is a higher virtue specialized to man alone. Other beings and organisms are deprived of this potentiality and attainment. God has created or deposited this love of reality in man. The development and progress of a nation is according to the measure and degree of that nation's scientific attainments. Through this means, its greatness is continually increased and day by day the welfare and prosperity of its people are assured."

...

. . .the Religion of God is the promoter of truth, the founder of science and knowledge, it is full of goodwill for learned men; it is the civilizer of mankind, the discoverer of the secrets of nature, and the enlightener of the horizons of the world. Consequently, how can it be said to oppose knowledge? God forbid! Nay, for God, knowledge is the most glorious gift of man and the most noble of human perfections. To oppose knowledge is ignorant, and he who detests knowledge and science is not a man, but rather an animal without intelligence. For knowledge is light, life, felicity, perfection, beauty and the means of approaching the Threshold of Unity. It is the honor and glory of the world of humanity, and the greatest bounty of God. Knowledge is identical with guidance, and ignorance is real error.